This week, my coworkers and I were given a fun challenge: using any tool or language, solve FizzBuzz! 🤓 Then present and explain the solution to the rest of the team. It was a great fit for us because our team is a mix of junior-to-senior developers and data professionals, working with everything from SQL to Python to C# to DAX to PowerShell. Those who had never solved FizzBuzz before got the chance to do so, while those who had already solved it got the chance to try again using a different tool or language.

While working on my solution, I ended up searching my own website for a post I wrote years ago: Using a Numbers Table in SQL Server. It gave me a nudge to share my FizzBuzz process and solution as well, even if there are a bazillion solutions already out there. I keep telling others to share what they do and learn, so this time I’m actually going to take my own advice. Go me! 😄

The FizzBuzz Challenge

FizzBuzz might be one of the most common programming challenges. The goal is to list all numbers from 1 to 100, but replace every number divisible by 3 with Fizz, every number divisible by 5 with Buzz, and every number divisible by both 3 and 5 with FizzBuzz:

- 1

- 2

- Fizz

- 4

- Buzz

- Fizz

- 7

- 8

- Fizz

- Buzz

…and so on.

Solving FizzBuzz in SQL

My first idea was to simply insert all values into a table and do a SELECT *, but I also wanted to actually solve the challenge 😂

Step 1: List all numbers from 1-100

I came up with three approaches using ROW_NUMBER() to list all numbers from 1-100.

My first approach was to query sys.all_objects:

SELECT TOP (100)

ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n

FROM sys.all_objects

ORDER BY n;

This felt a little too quick and dirty, though.

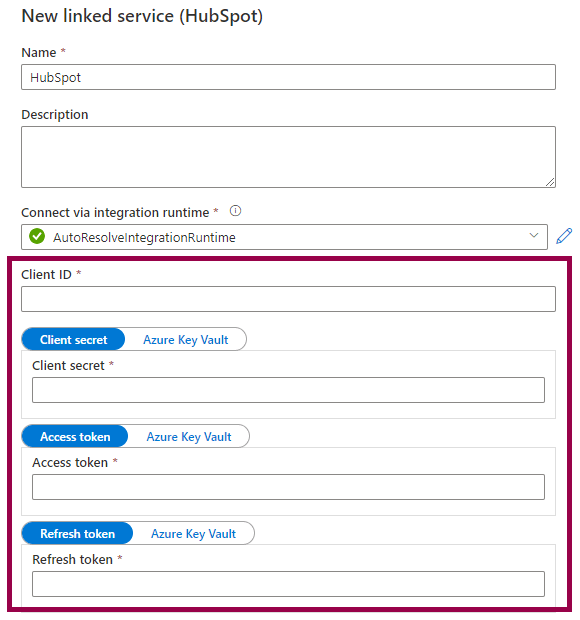
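One thing to keep in mind with this approach: TOP (100) can only return 100 numbers if sys.all_objects contains at least 100 rows. The query below is an optional sanity check, sketched here as an extra step and not part of the original solution. The column names available_rows and verdict are made up for the example.

```sql
-- Optional sanity check (not part of the original solution):
-- TOP (100) can only produce 100 numbers if the source has at least 100 rows.
SELECT
    COUNT(*) AS available_rows,
    CASE
        WHEN COUNT(*) >= 100 THEN 'enough rows'
        ELSE 'too few rows'
    END AS verdict
FROM sys.all_objects;
```

On a typical instance the view should hold far more than 100 rows, but the dependency is implicit, which is part of what makes this version feel quick and dirty.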

Next, I wanted to see if I could list the numbers without querying a table/view. At this point, I had the idea of a numbers table (or tally table) in my mind, since I have used and blogged about it in the past. I just couldn’t remember the syntax! 😅 So I boiled my logic down to “let’s just create 10 x 10 rows”. By using VALUES, I created two derived tables with 10 rows each (every row containing the value 1), then cross joined them to get 10 x 10 = 100 rows:

SELECT

ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n

FROM (

VALUES (1), (1), (1), (1), (1), (1), (1), (1), (1), (1)

) AS t1(n)

CROSS JOIN (

VALUES (1), (1), (1), (1), (1), (1), (1), (1), (1), (1)

) AS t2(n)

ORDER BY n;
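A variation on the same 10 x 10 idea, shown only as a sketch: if the two derived tables hold the digits 0 to 9 instead of ten 1s, each number can be calculated directly, without ROW_NUMBER(). This is a swapped-in technique, not one of the three approaches above, and the aliases tens, ones, and d are made up for the example.

```sql
-- Sketch: build 1-100 arithmetically from two sets of digits (0-9).
-- tens.d * 10 + ones.d covers 0-99, so adding 1 shifts the range to 1-100.
SELECT
    tens.d * 10 + ones.d + 1 AS n
FROM (
    VALUES (0), (1), (2), (3), (4), (5), (6), (7), (8), (9)
) AS tens(d)
CROSS JOIN (
    VALUES (0), (1), (2), (3), (4), (5), (6), (7), (8), (9)
) AS ones(d)
ORDER BY n;
```

Because each value is computed from the digits themselves, the numbers do not depend on the order in which the rows happen to be numbered.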

Finally, I looked up my numbers table syntax:

WITH

L0 AS (SELECT 1 AS n UNION ALL SELECT 1), -- 2 rows

L1 AS (SELECT 1 AS n FROM L0 AS a CROSS JOIN L0 AS b), -- 4 rows (2 x 2)

L2 AS (SELECT 1 AS n FROM L1 AS a CROSS JOIN L1 AS b), -- 16 rows (4 x 4)

L3 AS (SELECT 1 AS n FROM L2 AS a CROSS JOIN L2 AS b), -- 256 rows (16 x 16)

L4 AS (SELECT 1 AS n FROM L3 AS a CROSS JOIN L3 AS b), -- 65 536 rows (256 x 256)

L5 AS (SELECT 1 AS n FROM L4 AS a CROSS JOIN L4 AS b), -- 4 294 967 296 rows (65 536 x 65 536)

Nums AS (SELECT ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n FROM L5)

SELECT TOP (100)

n

FROM Nums

ORDER BY n;
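To make the row counts in the comments easier to trust, here is a small sketch that counts the rows at the first few levels. It stops at L3 on purpose: counting L5 would mean actually generating all 4 294 967 296 rows, and that count would not fit in the INT that COUNT(*) returns. The column names l0_rows to l3_rows are made up for the example.

```sql
-- Sketch: verify how quickly the cross joins multiply the row count.
WITH
    L0 AS (SELECT 1 AS n UNION ALL SELECT 1),
    L1 AS (SELECT 1 AS n FROM L0 AS a CROSS JOIN L0 AS b),
    L2 AS (SELECT 1 AS n FROM L1 AS a CROSS JOIN L1 AS b),
    L3 AS (SELECT 1 AS n FROM L2 AS a CROSS JOIN L2 AS b)
SELECT
    (SELECT COUNT(*) FROM L0) AS l0_rows, -- 2
    (SELECT COUNT(*) FROM L1) AS l1_rows, -- 4   (2 x 2)
    (SELECT COUNT(*) FROM L2) AS l2_rows, -- 16  (4 x 4)
    (SELECT COUNT(*) FROM L3) AS l3_rows; -- 256 (16 x 16)
```

Each level squares the row count of the level before it, so L3 alone would already be more than enough for FizzBuzz.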

Step 2: Replace numbers with Fizz, Buzz, or FizzBuzz

Once I had figured out how to list the numbers, I needed to replace the numbers with Fizz, Buzz, and FizzBuzz. The challenge here, in any programming language, is to understand how to check whether a number is divisible by another number, and to understand in which order things are evaluated.

In SQL Server, you can use the % (modulus) operator to return the remainder after dividing one number by another. If the remainder is 0, the first number is divisible by the second.

(Fun fact: The first time I encountered the modulus operator, I thought that it returned the digit(s) after the decimal symbol. As in, I thought that 10 % 4 would return 5 because 10 / 4 = 2.5. Imagine my surprise when it returned 2! Why 2? And then I realized that ohhh, it’s because you can only fully fit the number 4 two times inside 10, and then you have 2 remaining… What can I say, math is difficult enough in my first language! 😅)
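A few quick examples of the % operator, using the numbers from the fun fact plus some FizzBuzz-style checks. The column names are made up for the example.

```sql
-- Sketch: what % returns for a few integer pairs.
SELECT
    10 % 4 AS remainder_10_by_4, -- 2: 4 fits into 10 twice, with 2 left over
    10 / 4 AS quotient_10_by_4,  -- 2: dividing two integers gives an integer in T-SQL
    9 % 3  AS remainder_9_by_3,  -- 0: 9 is divisible by 3
    10 % 5 AS remainder_10_by_5, -- 0: 10 is divisible by 5
    7 % 3  AS remainder_7_by_3;  -- 1: 7 is not divisible by 3
```

Only the rows where the remainder is 0 count as divisible, which is exactly the check the CASE expression needs.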

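The final step is to construct the CASE expression so that it checks for FizzBuzz first. CASE returns the result of the first WHEN condition that is true, so if Fizz came first, 15 would be replaced with Fizz and never reach FizzBuzz: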

WITH

L0 AS (SELECT 1 AS n UNION ALL SELECT 1),

L1 AS (SELECT 1 AS n FROM L0 AS a CROSS JOIN L0 AS b),

L2 AS (SELECT 1 AS n FROM L1 AS a CROSS JOIN L1 AS b),

L3 AS (SELECT 1 AS n FROM L2 AS a CROSS JOIN L2 AS b),

L4 AS (SELECT 1 AS n FROM L3 AS a CROSS JOIN L3 AS b),

L5 AS (SELECT 1 AS n FROM L4 AS a CROSS JOIN L4 AS b),

Nums AS (SELECT ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n FROM L5)

SELECT TOP (100)

CASE

WHEN n % 3 = 0 AND n % 5 = 0 THEN 'FizzBuzz'

WHEN n % 3 = 0 THEN 'Fizz'

WHEN n % 5 = 0 THEN 'Buzz'

ELSE CAST(n AS VARCHAR(3))

END

FROM Nums

ORDER BY n;
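To see the effect of the evaluation order, this sketch runs two CASE expressions side by side on a handful of test values. The column names fizz_first and fizzbuzz_first, and the choice of test values, are made up for the example.

```sql
-- Sketch: the same conditions in two different orders.
SELECT
    n,
    CASE
        WHEN n % 3 = 0 THEN 'Fizz'
        WHEN n % 5 = 0 THEN 'Buzz'
        WHEN n % 3 = 0 AND n % 5 = 0 THEN 'FizzBuzz' -- never reached
        ELSE CAST(n AS VARCHAR(3))
    END AS fizz_first,
    CASE
        WHEN n % 3 = 0 AND n % 5 = 0 THEN 'FizzBuzz'
        WHEN n % 3 = 0 THEN 'Fizz'
        WHEN n % 5 = 0 THEN 'Buzz'
        ELSE CAST(n AS VARCHAR(3))
    END AS fizzbuzz_first
FROM (
    VALUES (3), (5), (15), (30)
) AS t(n)
ORDER BY n;
```

For 3 and 5 both columns agree, but for 15 and 30 the fizz_first column returns Fizz while fizzbuzz_first returns FizzBuzz.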

Or, if you really just want that quick and dirty solution… 😁

SELECT TOP (100)

CASE

WHEN n % 15 = 0 THEN 'FizzBuzz'

WHEN n % 3 = 0 THEN 'Fizz'

WHEN n % 5 = 0 THEN 'Buzz'

ELSE CAST(n AS VARCHAR(3))

END

FROM (SELECT ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n FROM sys.all_objects) AS t

ORDER BY n;
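The n % 15 shortcut works because 3 and 5 share no common factors, so a number is divisible by both exactly when it is divisible by 3 x 5 = 15. As a sketch, the query below compares the two conditions for every number from 1 to 100 and counts the rows where they disagree. The aliases nums and mismatches are made up for the example.

```sql
-- Sketch: confirm that "n % 15 = 0" and "n % 3 = 0 AND n % 5 = 0" agree for 1-100.
SELECT
    COUNT(*) AS mismatches -- 0: the two conditions never disagree
FROM (
    SELECT
        ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) AS n
    FROM (
        VALUES (1), (1), (1), (1), (1), (1), (1), (1), (1), (1)
    ) AS t1(n)
    CROSS JOIN (
        VALUES (1), (1), (1), (1), (1), (1), (1), (1), (1), (1)
    ) AS t2(n)
) AS nums
WHERE
    (CASE WHEN n % 15 = 0 THEN 1 ELSE 0 END)
    <> (CASE WHEN n % 3 = 0 AND n % 5 = 0 THEN 1 ELSE 0 END);
```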

Your Turn!

How would you solve the FizzBuzz challenge? Can you think of a completely different approach? Can you fix my code and make it better or prettier? Go on, do it, it’s fun! 😃