Templates in Azure Data Factory

In the previous post, we looked at setting up source control. Once we did that, a new menu popped up under factory resources: templates! In this post, we will take a closer look at this feature. What is the template gallery? How can you create pipelines from templates? And how can you create your own templates?

Let’s hop straight into Azure Data Factory!

Using Templates from the Template Gallery

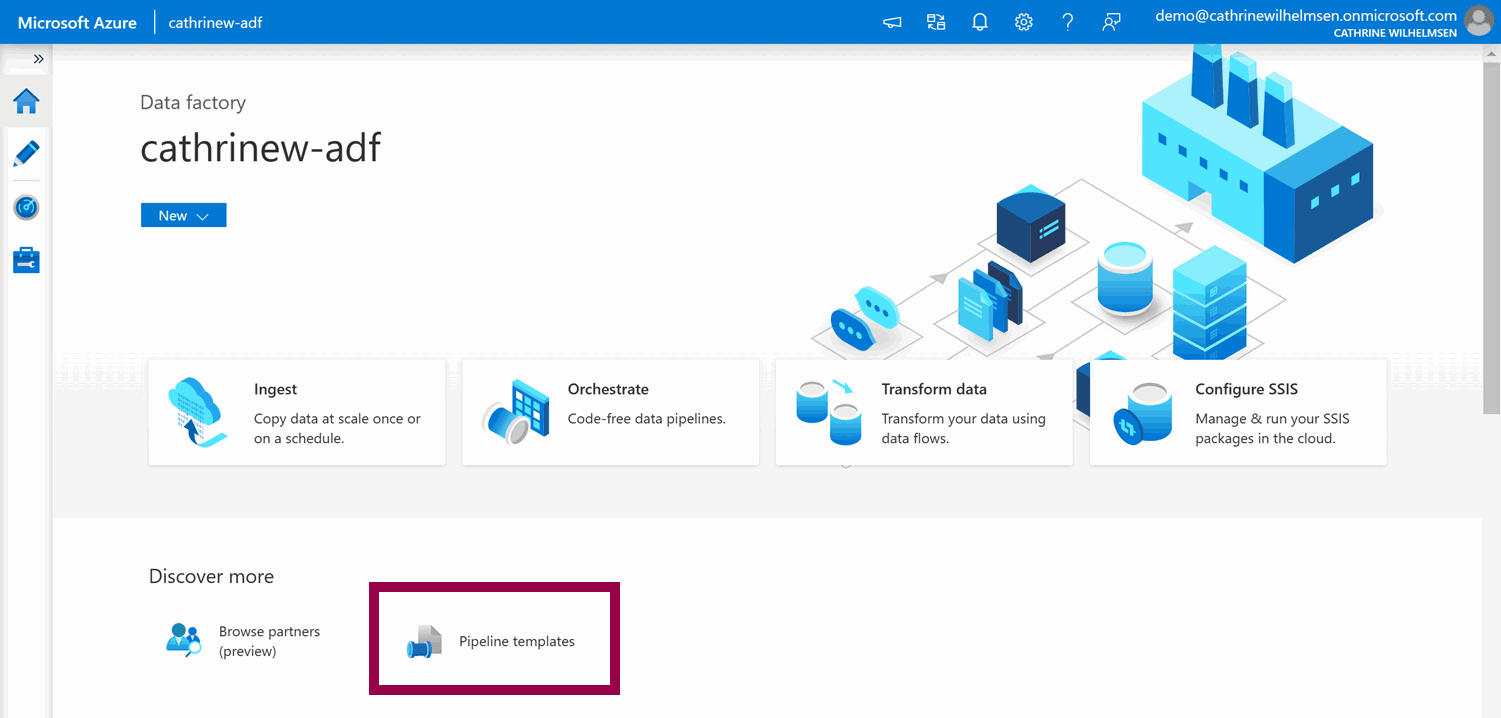

From the Home page, you can create pipelines from templates:

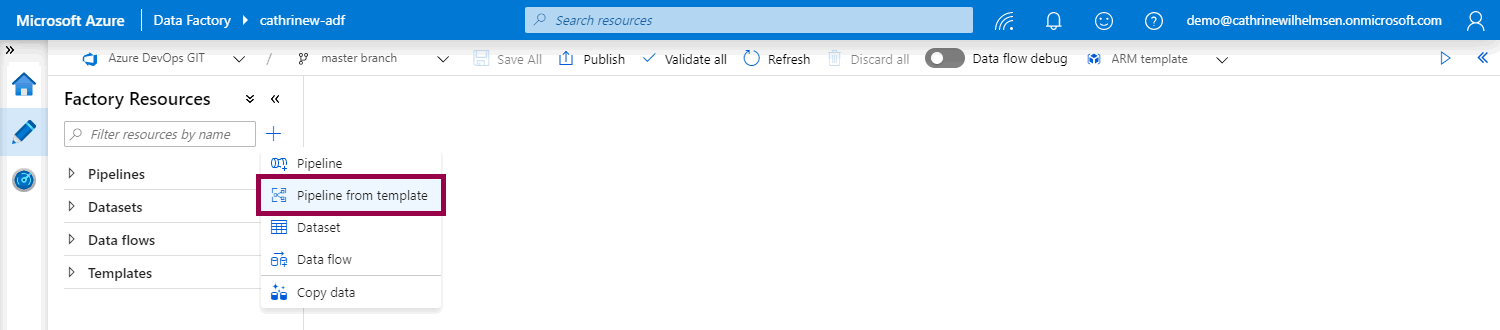

From the Author page, you can click on the pipeline actions menu, then click pipeline from template:

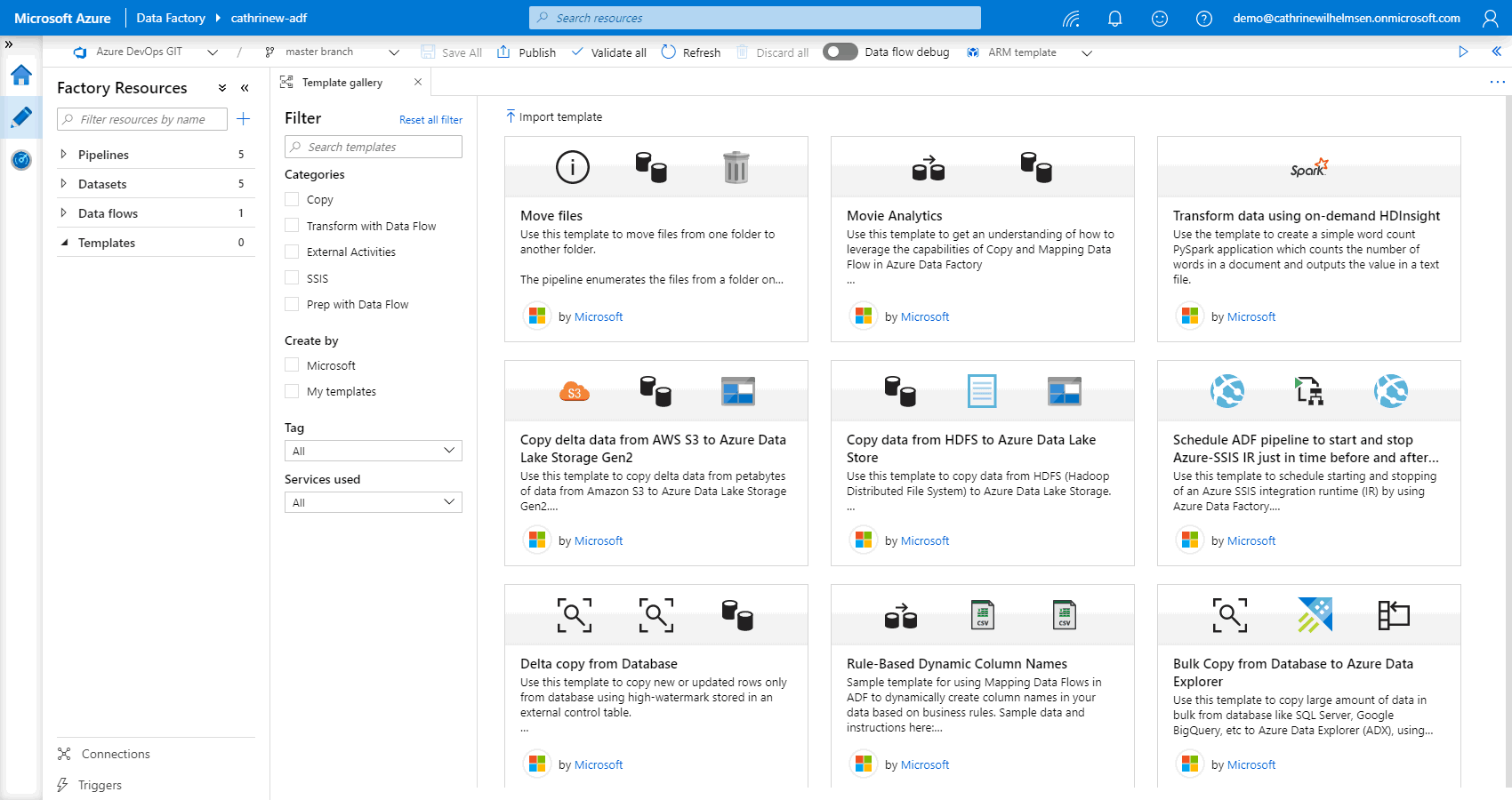

Both of these open up the template gallery, with a whole bunch of pre-defined templates and patterns:

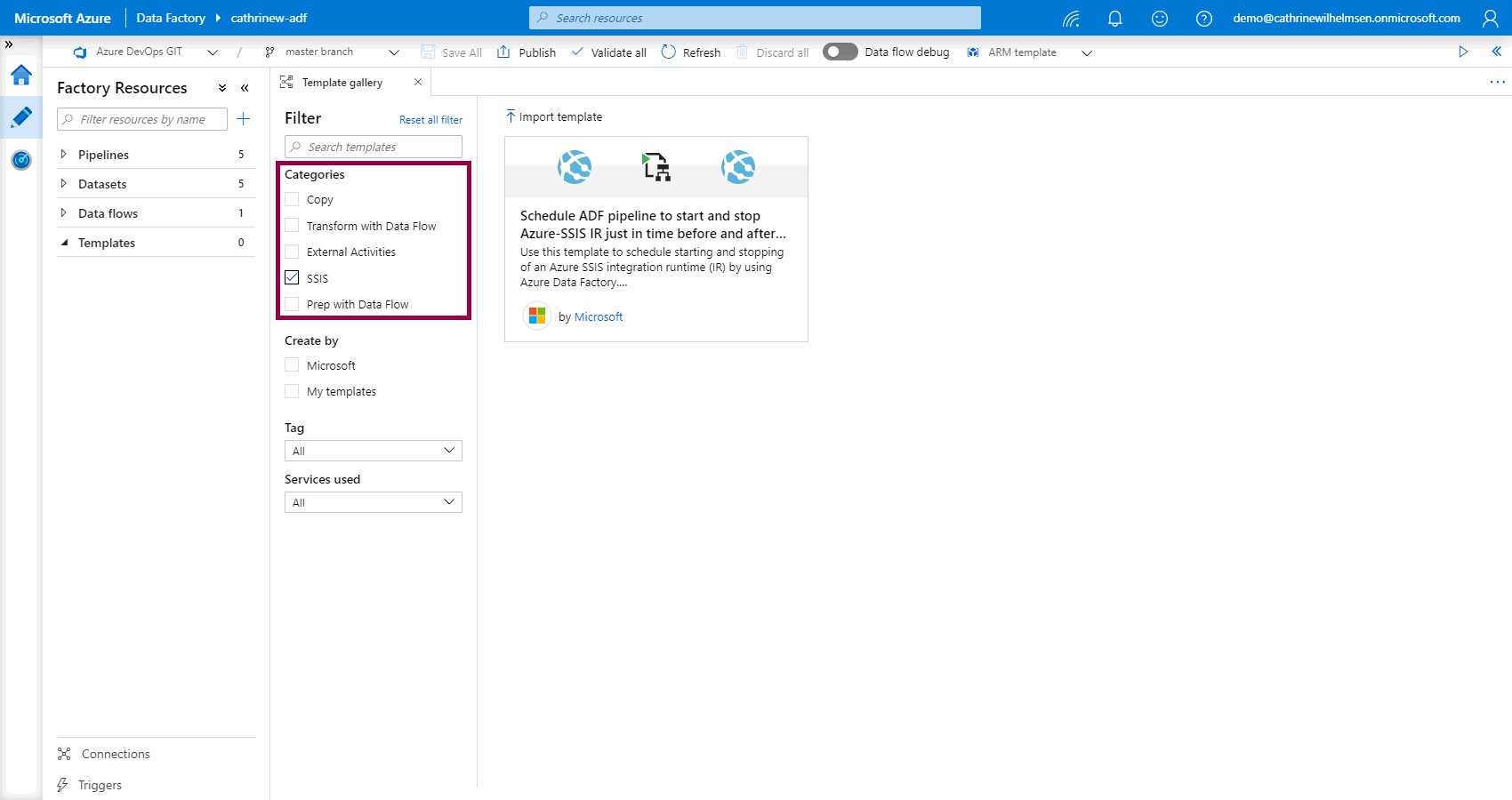

You can filter on categories…

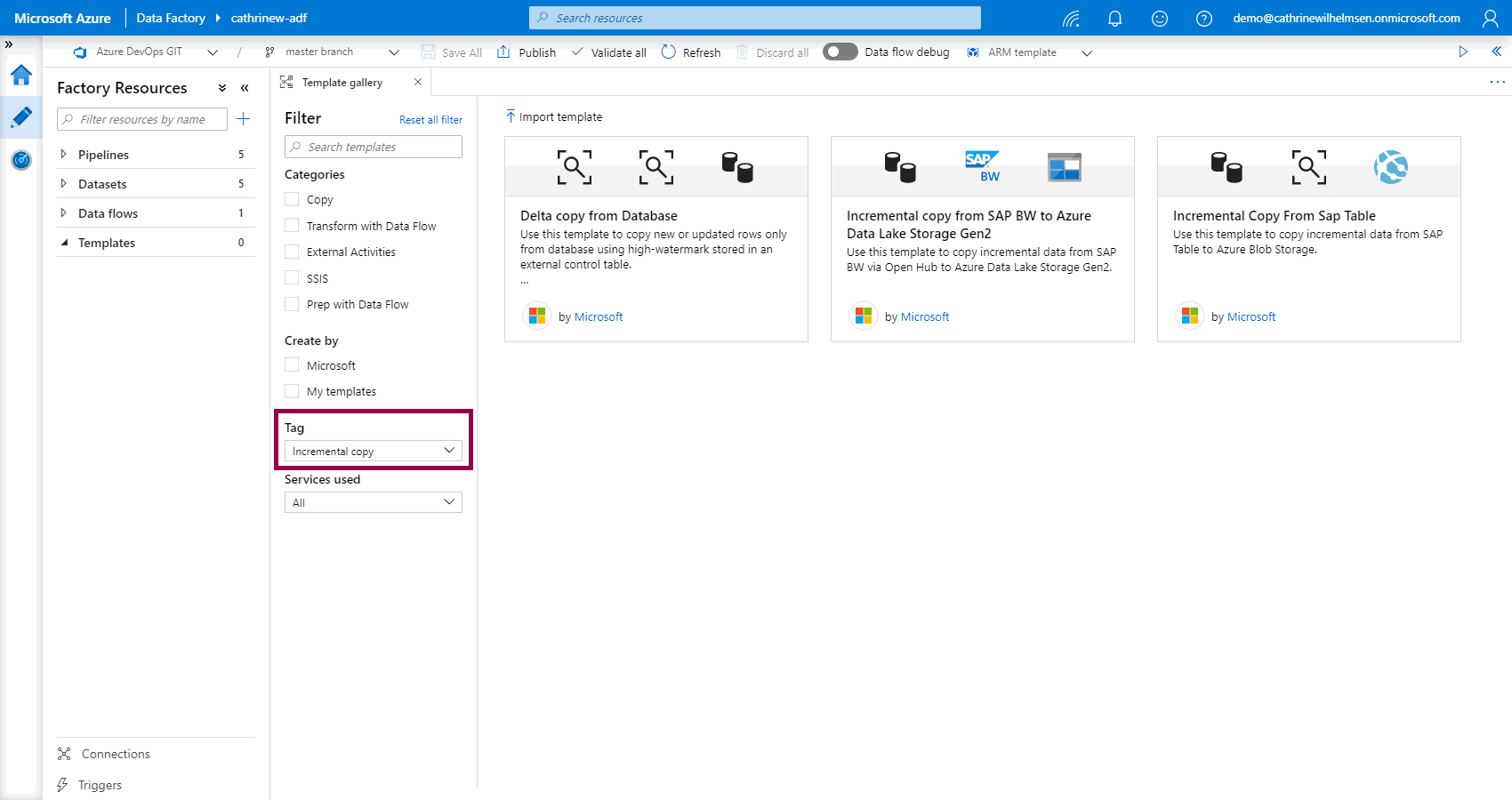

…or tags…

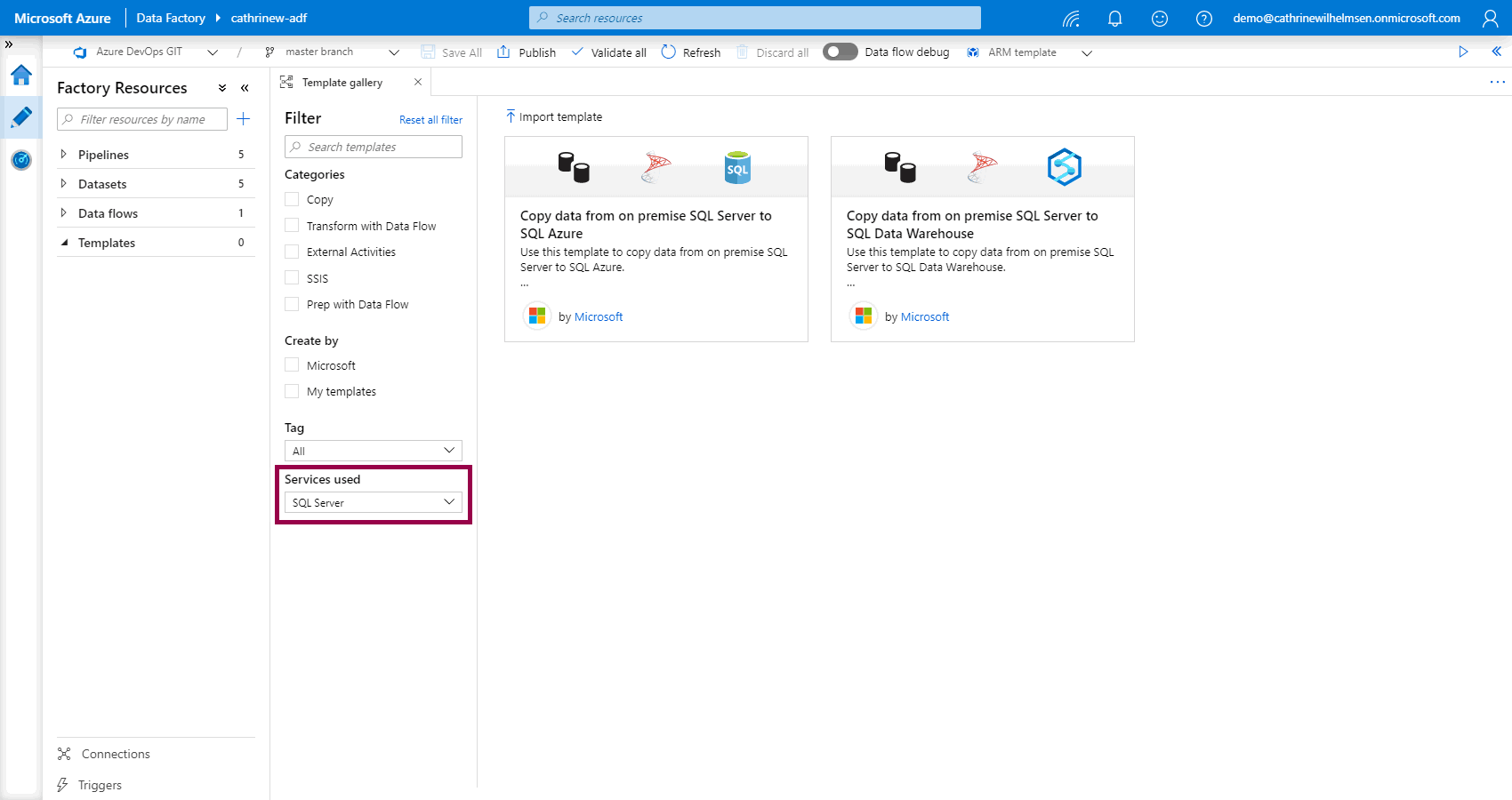

…or services…

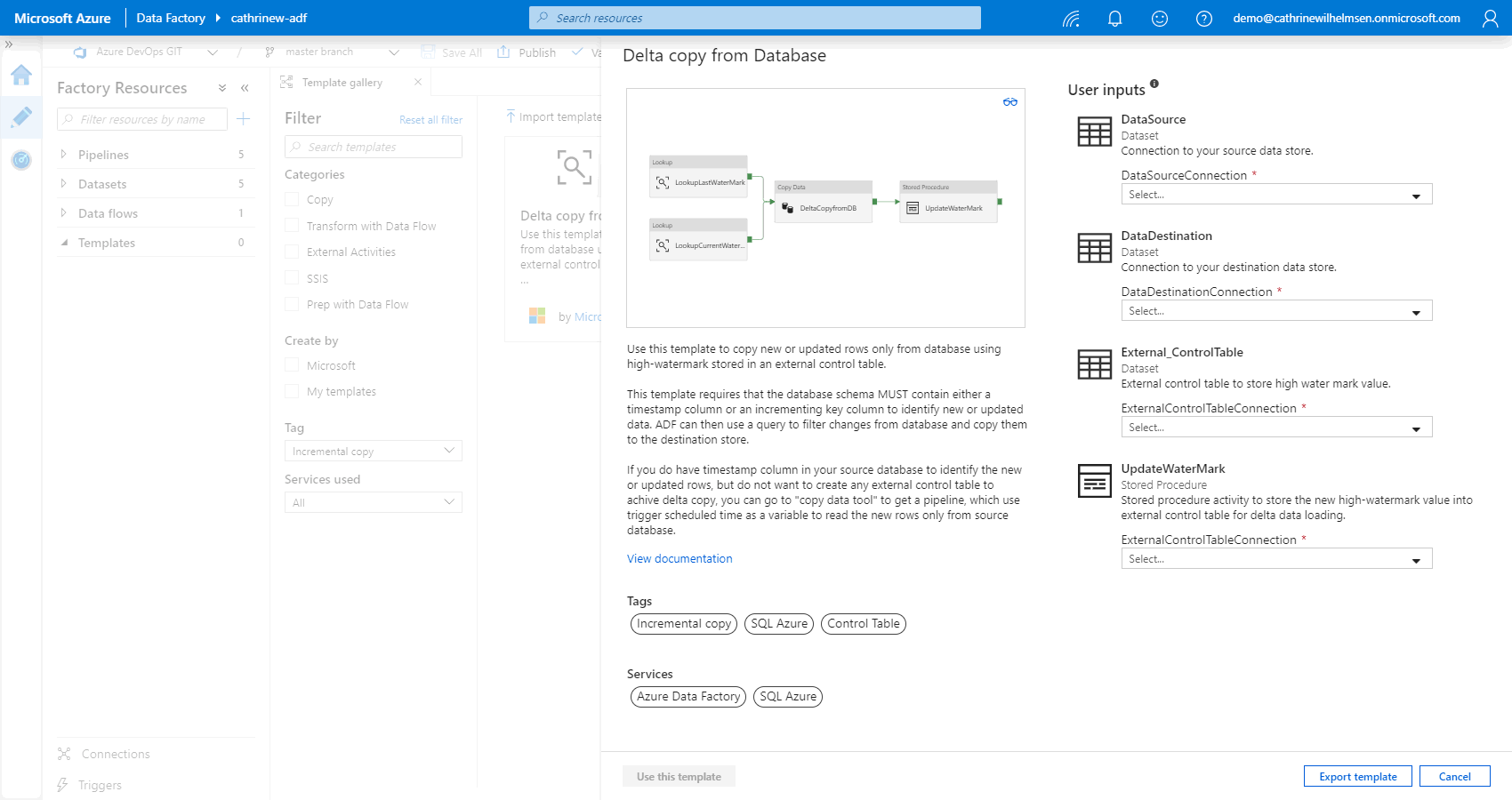

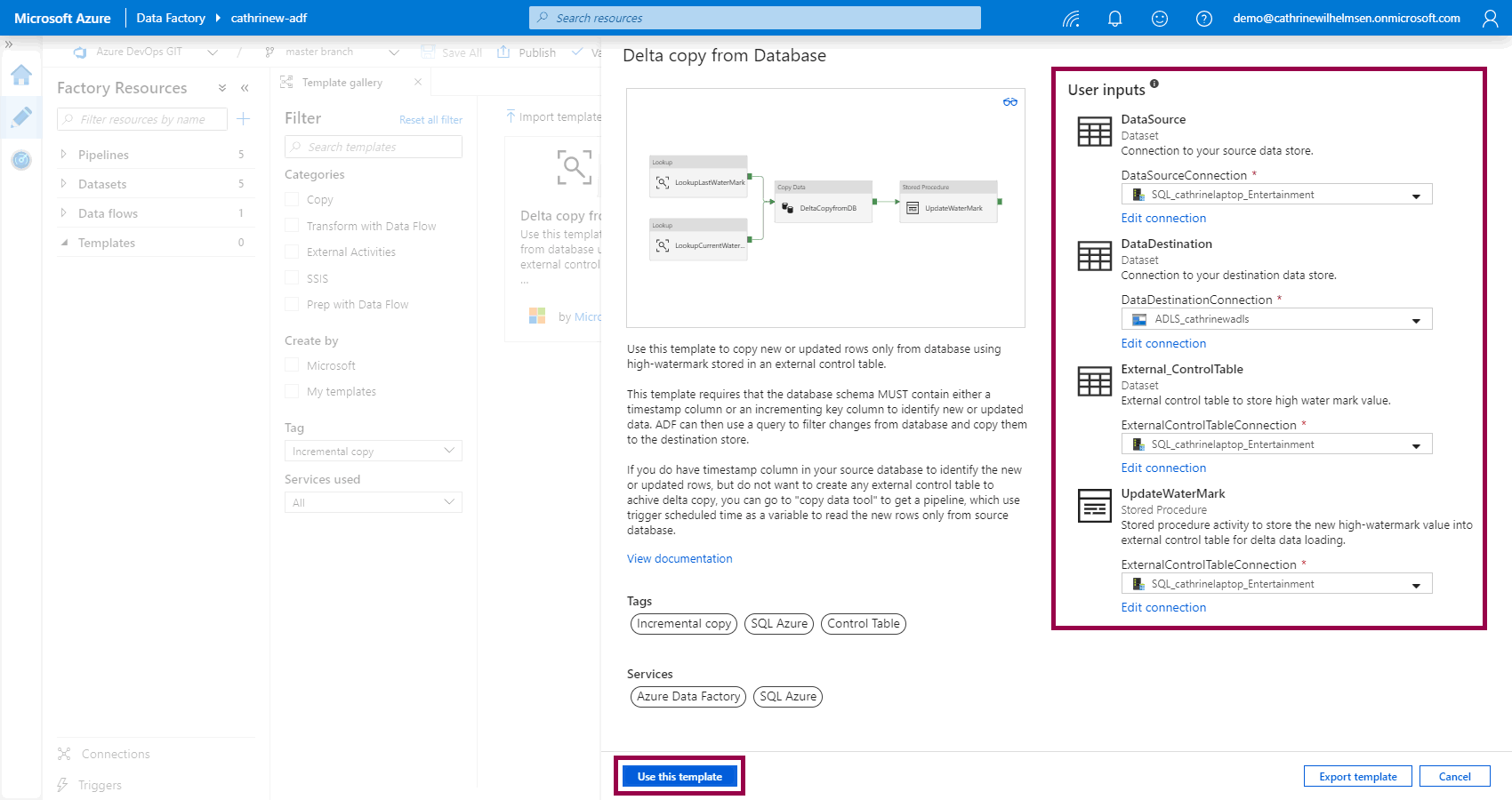

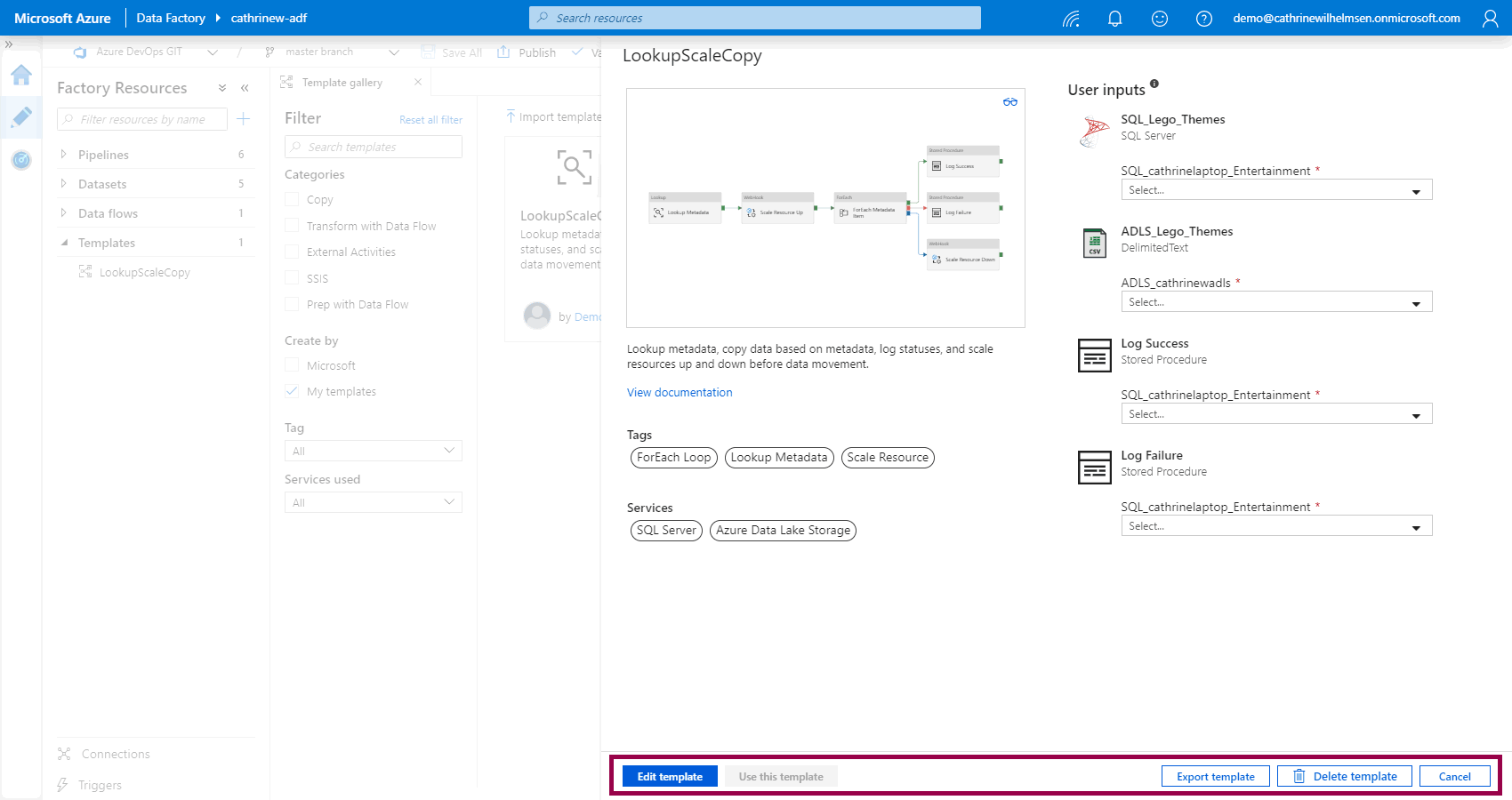

When you click on a template, you will see a preview of the pipeline, the description, tags, services, and user inputs:

In the user inputs, select the datasets you want to use, or create new ones. Then, click use this template:

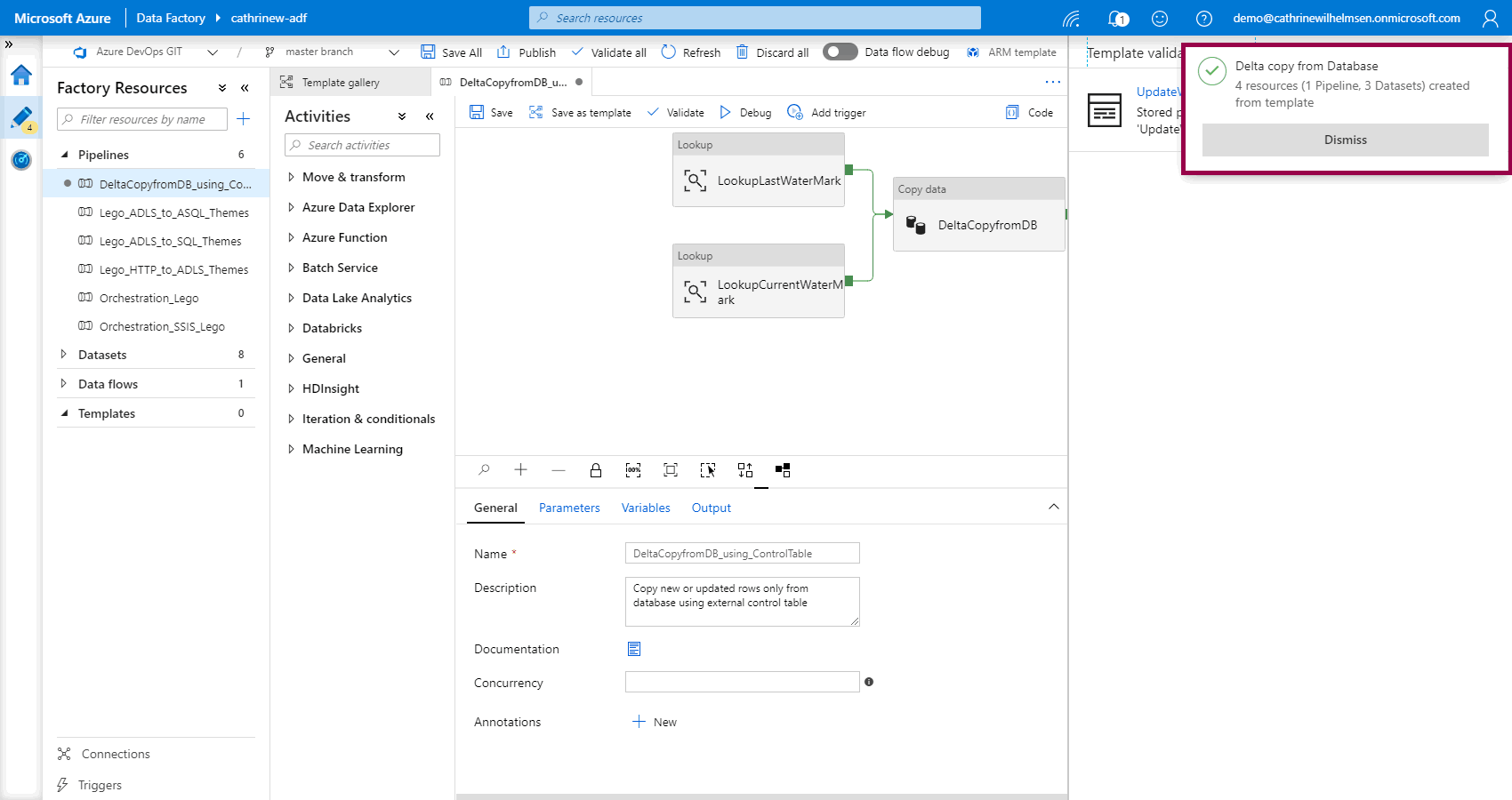

The template will create the necessary resources…

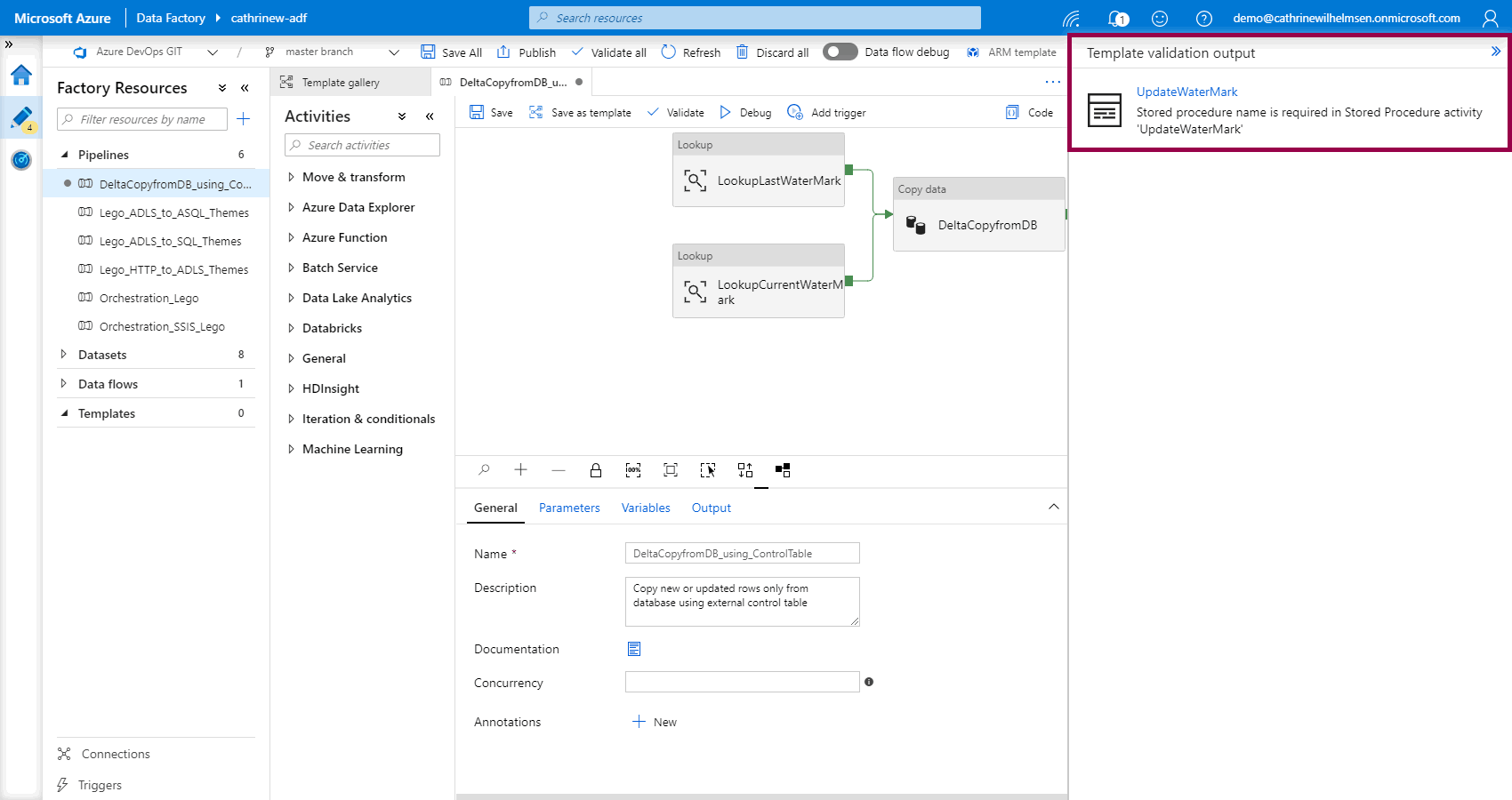

…and call out the things you need to configure:

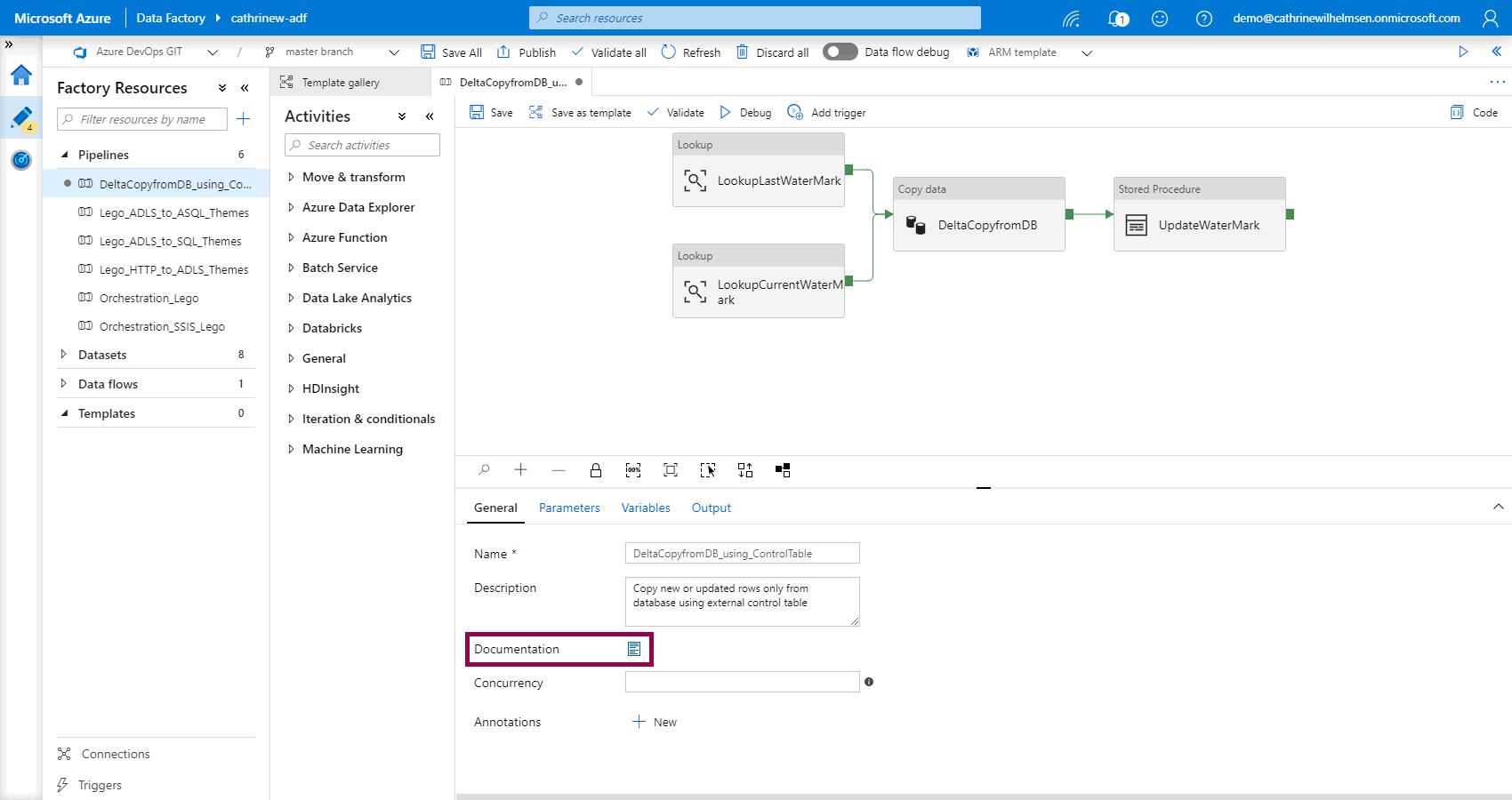

If you’re not sure how to configure the pipeline, you can click the documentation link:

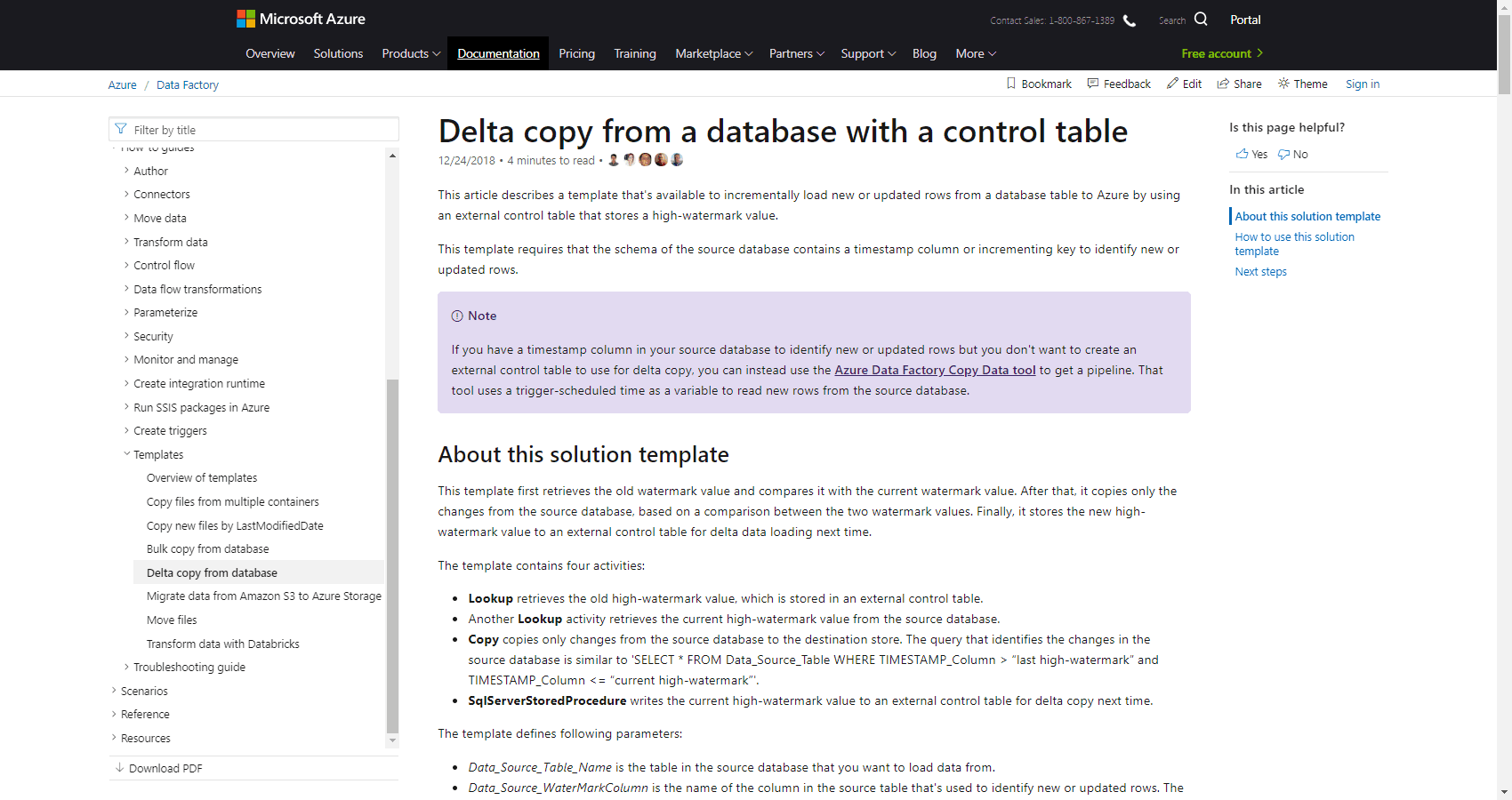

This will take you to the template documentation:

Easy, huh? 😊

Creating Custom Templates

You can also create custom templates and share them with your team - or share them externally with others. Custom templates are saved in your code repository and will show up in the template gallery for you and your team. If you want to share them externally, you can easily export them, so others can import them into their own Azure Data Factory.

Let’s take a look!

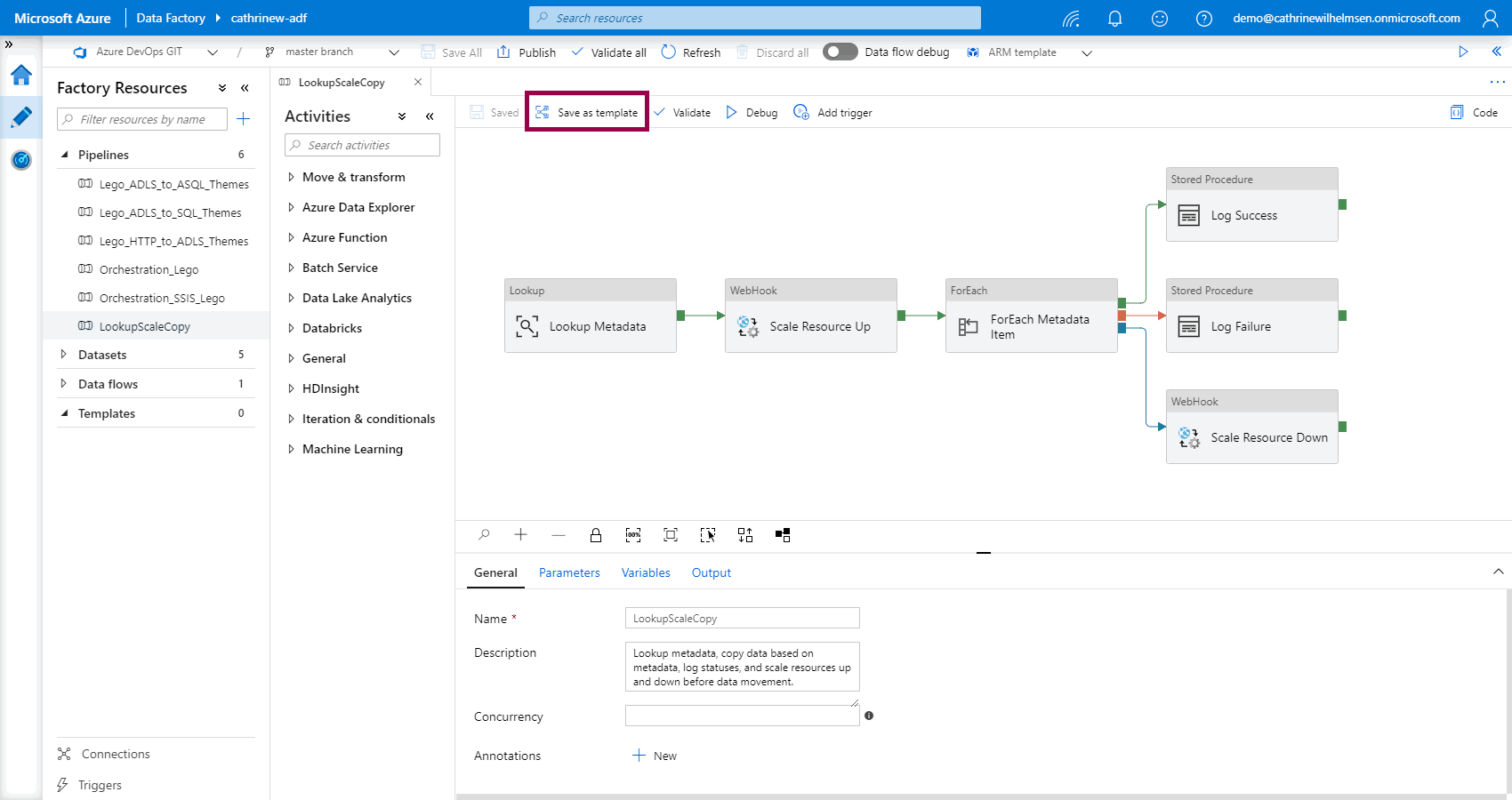

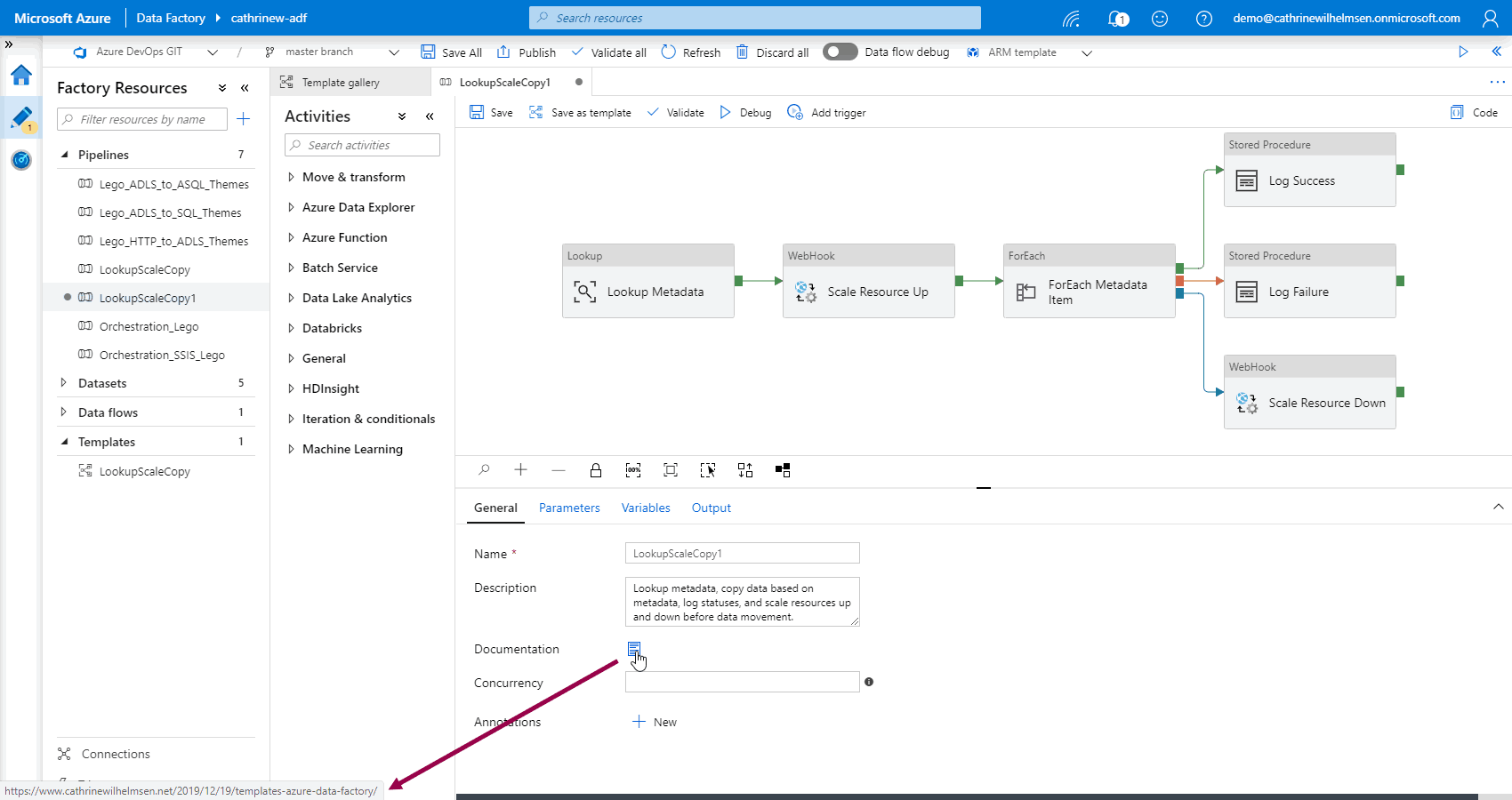

Say that you have a pipeline that looks up some metadata, copies some data based on that metadata, logs the status of the pipeline, and scales some resources up and down. Something liiike… this! You can save that as a template by clicking save as template:

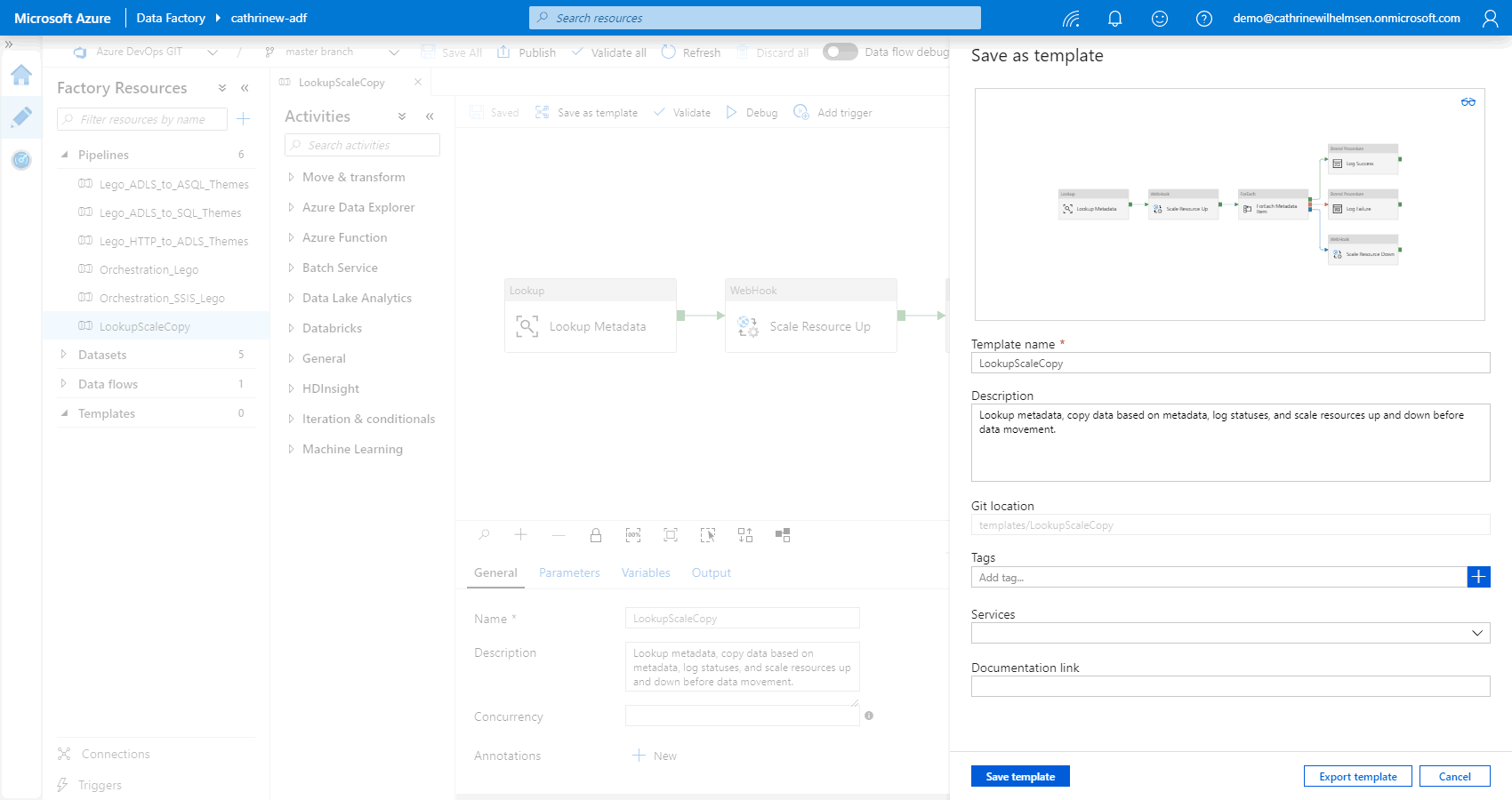

This will open the save as template pane, where you see an illustration of your pipeline. Here, you specify the template name, description, tags, services, and documentation link:

Notice that you can create your own tags, but that you have to select from a pre-defined list of services:

You may want to create a naming convention or a set of standard tags to stick to, so it doesn’t get out of control 😅
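If you want to keep an eye on that standard tag list, a tiny script can help. This is just a minimal sketch that runs outside Azure Data Factory, and the tag names and the `check_tags` helper are made up for illustration. Azure Data Factory itself does not enforce any of this:

```python
def check_tags(tags, allowed_tags):
    """Split tags into those on the team's standard list and those that are not.

    The comparison ignores case and surrounding whitespace.
    """
    allowed = {tag.strip().lower() for tag in allowed_tags}
    accepted, rejected = [], []
    for tag in tags:
        if tag.strip().lower() in allowed:
            accepted.append(tag)
        else:
            rejected.append(tag)
    return accepted, rejected


if __name__ == "__main__":
    # Hypothetical standard tags; pick whatever fits your team
    STANDARD_TAGS = ["Copy", "Metadata", "Logging", "Scaling"]
    accepted, rejected = check_tags(["copy", "Logging", "my-test"], STANDARD_TAGS)
    print("OK:", accepted)
    print("Not on the list:", rejected)
```

Anything that ends up in the second list is a tag someone should either rename or add to the agreed list.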

From here, you can either save or export the template.

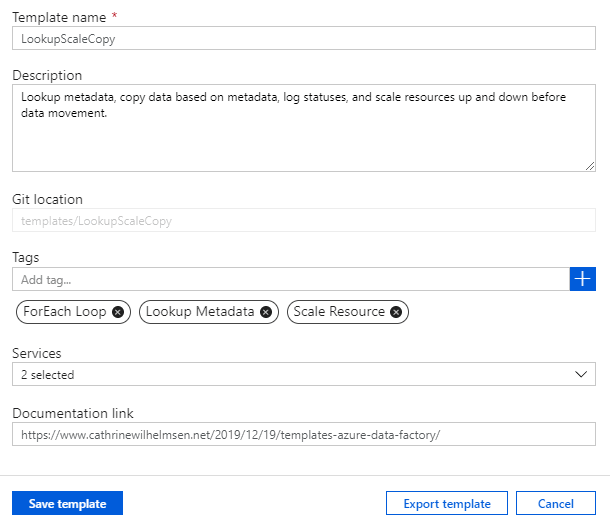

But before we do that… do you ever need screenshots of your pipelines? You don’t actually need to take a screenshot. Just save the preview image as an SVG:

SVG files, scalable vector graphics, can be scaled up and down without loss in quality. Tadaaa! Now you have an illustration of your pipeline, instead of a screenshot:

Cool cool cool. I love SVGs 🤓

Saving Custom Templates

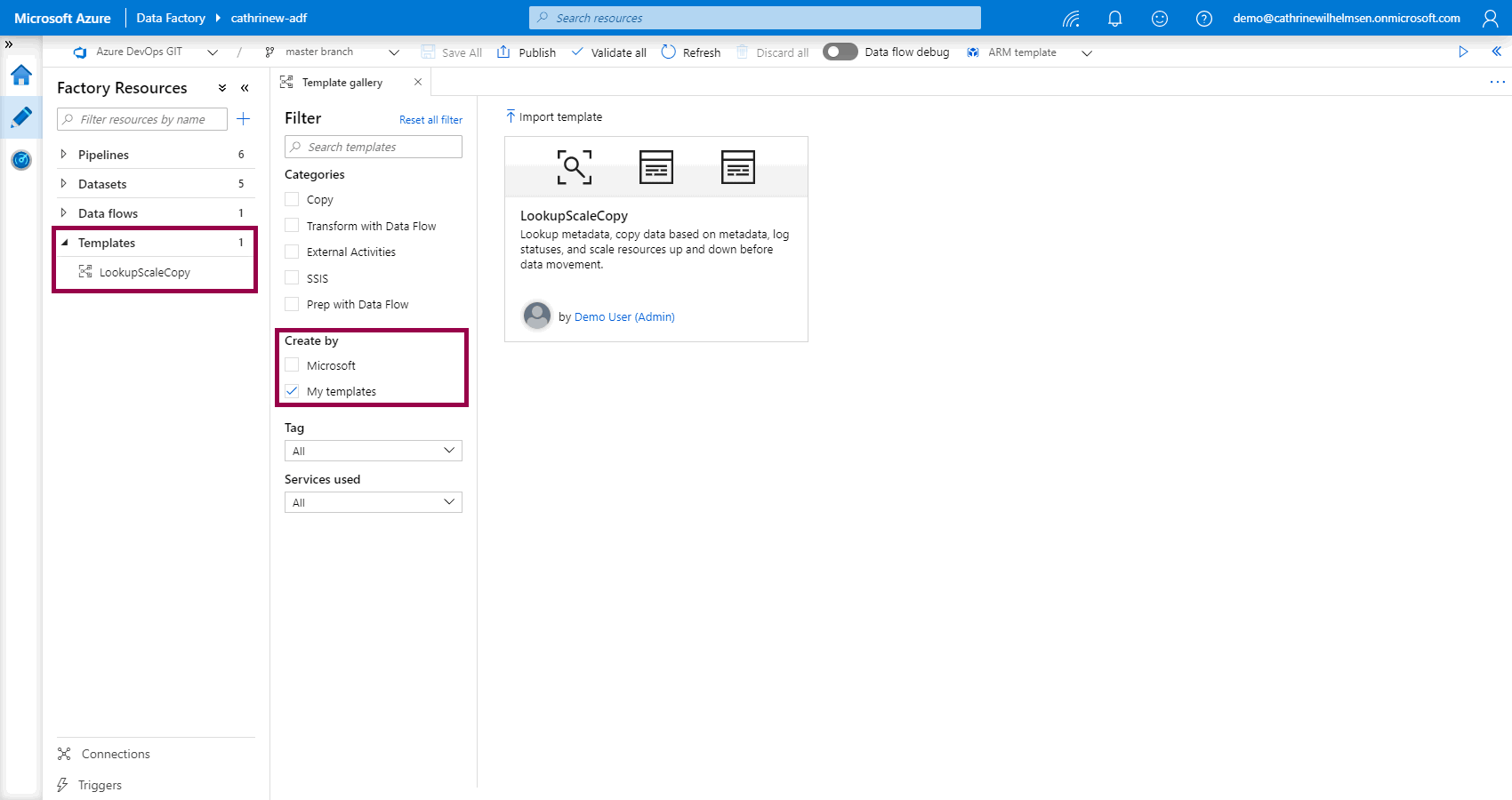

When you save a template, it will show up under factory resources, as well as in the template gallery:

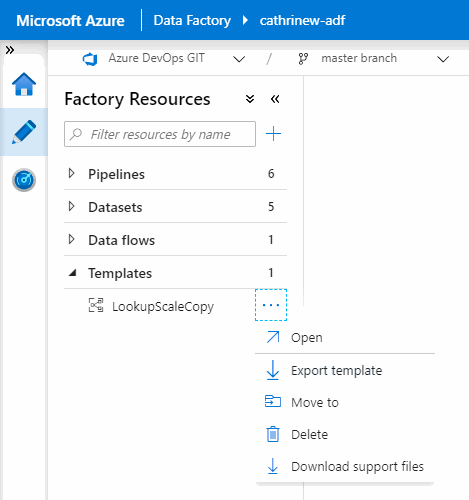

If you click on it, you can choose to edit, use, export, or delete the template:

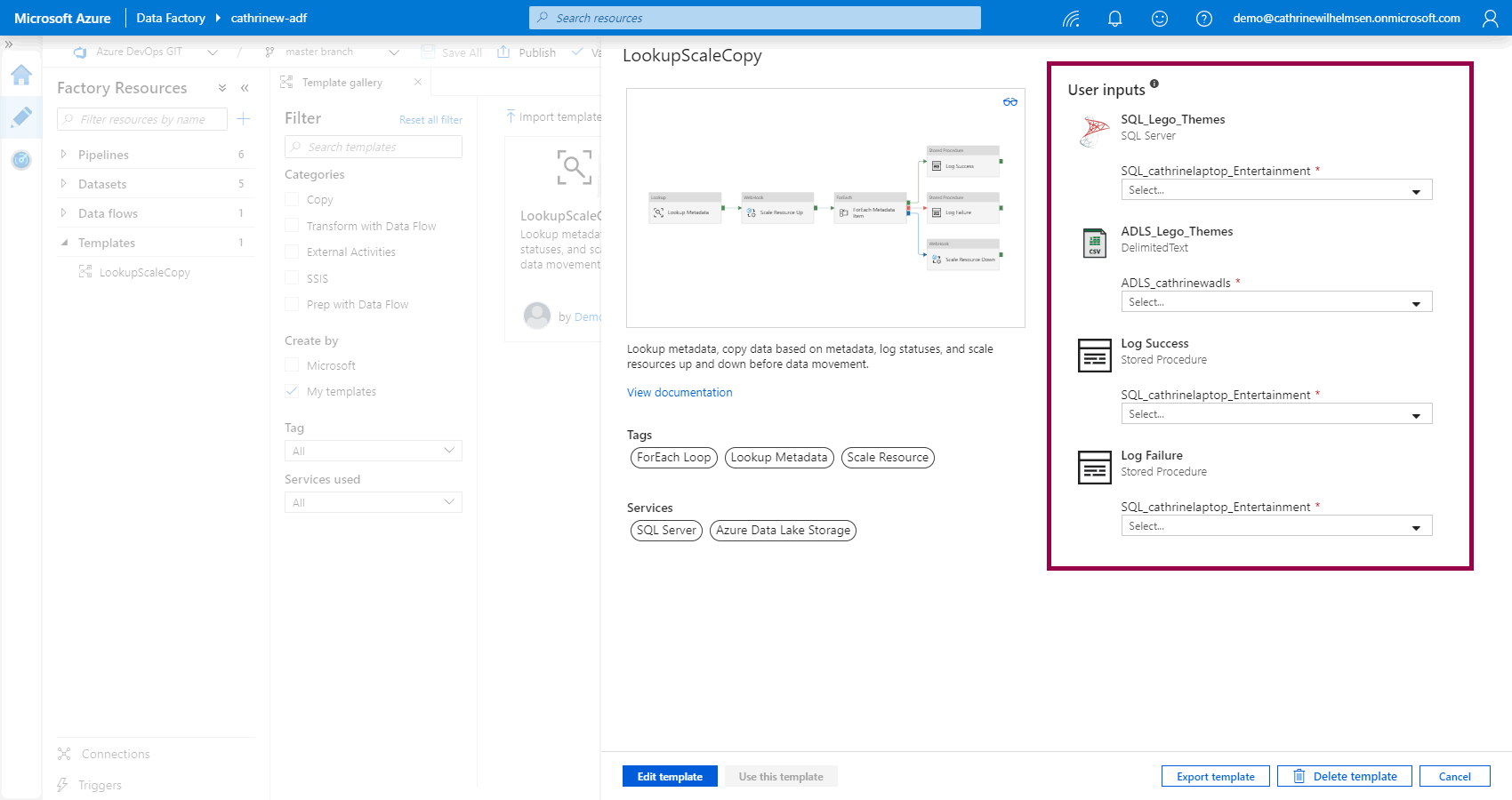

Notice that the user inputs are using the names of our original datasets and linked services. You have to decide whether this is informative and useful, or if you need to rename them to something more descriptive and less specific:

When you use a template, notice how the documentation link is now pointing to a custom URL. This is a great way of linking your Azure Data Factory to your project documentation:

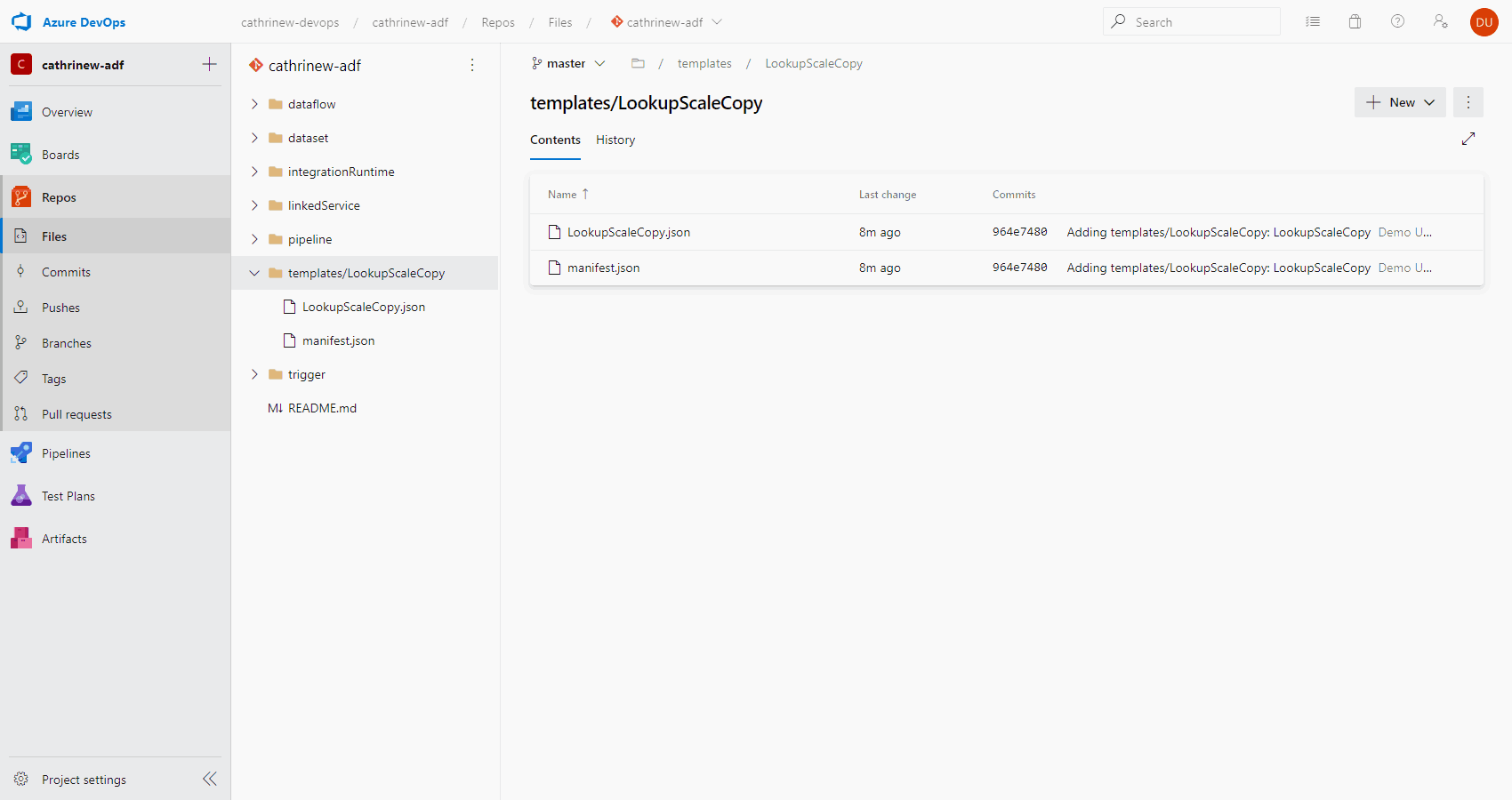

Custom templates are saved in your code repository:

Exporting and Importing Custom Templates

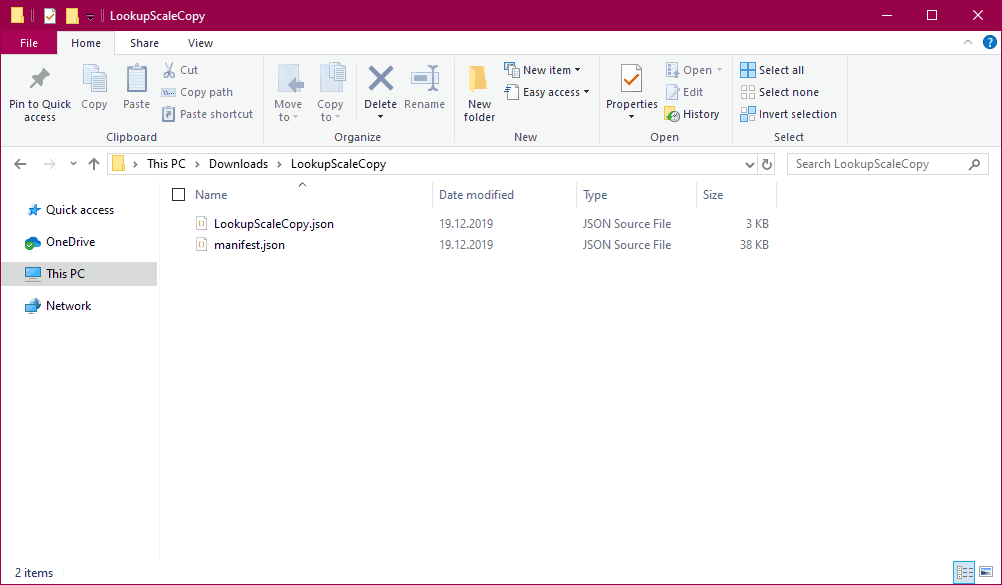

When you export a template, you will download a ZIP file. The contents of the ZIP file are the same as what you will find in your code repository:

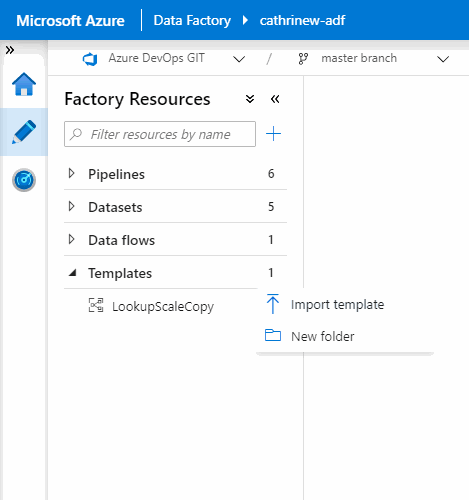
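If you would rather peek inside the exported ZIP file without unpacking it, Python's built-in `zipfile` module can list the contents. This is a minimal sketch: the `list_template_files` helper is hypothetical, and the file names in the demo are stand-ins, not necessarily what your export contains:

```python
import io
import zipfile


def list_template_files(zip_source):
    """Return the sorted file names stored in an exported template ZIP.

    zip_source can be a path on disk or a file-like object.
    """
    with zipfile.ZipFile(zip_source) as archive:
        # Skip directory entries so only real files are listed
        return sorted(
            info.filename for info in archive.infolist() if not info.is_dir()
        )


if __name__ == "__main__":
    # Build a tiny stand-in archive in memory; these file names are made up
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("manifest.json", "{}")
        archive.writestr("MyTemplate.json", "{}")
    buffer.seek(0)

    for name in list_template_files(buffer):
        print(name)
```

To check a real export, pass the path of the downloaded ZIP file to `list_template_files` and compare the result with the template folder in your repository.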

You can import and export templates from the templates menu:

Now you never have to start building a pipeline from scratch again 🥳

Summary

In this post, we looked at pipeline templates, the template gallery, and how you can create your own templates. They are an easy and convenient way to share design patterns and best practices between team members, and across Azure Data Factories.

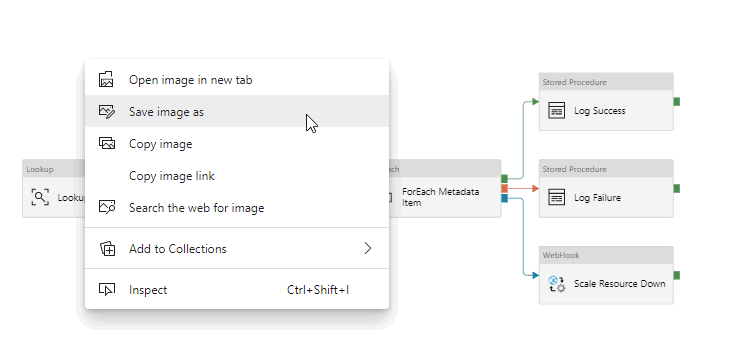

But this pipeline… 👇🏻 This pipeline uses a couple of fun activities… Notice the Lookup and ForEach? Yeah. They’re awesome. They can be used to create dynamic pipelines, woohoo!

In the next few posts, we will go through how to build dynamic pipelines in Azure Data Factory. We will cover things like parameters, variables, loops, and lookups. Fun!

About the Author

Cathrine Wilhelmsen is a Microsoft Data Platform MVP, international speaker, author, blogger, organizer, and chronic volunteer. She loves data and coding, as well as teaching and sharing knowledge - oh, and sci-fi, gaming, coffee and chocolate 🤓
