Linked Services in Azure Data Factory

In the previous post, we looked at datasets and their properties. In this post, we will look at linked services in more detail. How do you configure them? What are the authentication options for Azure services? And how do you securely store your credentials?

Let’s start by creating a linked service to an Azure SQL Database. Yep, that linked service you saw screenshots of in the previous post. Mhm, the one I sneakily created already so I could explain using datasets as a bridge to linked services. That one 😅

Pssst! Linked Services have been moved into the management page. I'm working on updating the descriptions and screenshots, thank you for your understanding and patience 😊

Creating Linked Services

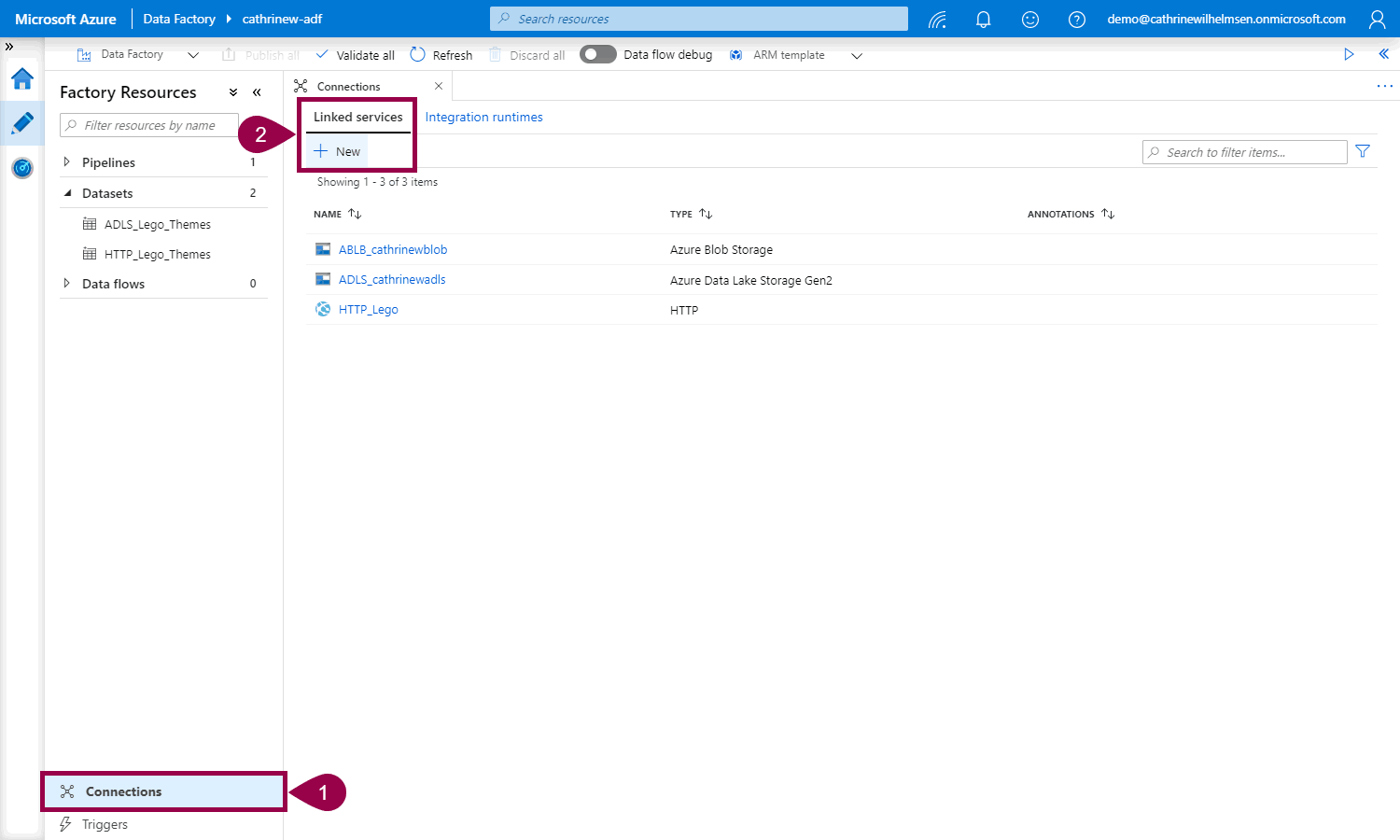

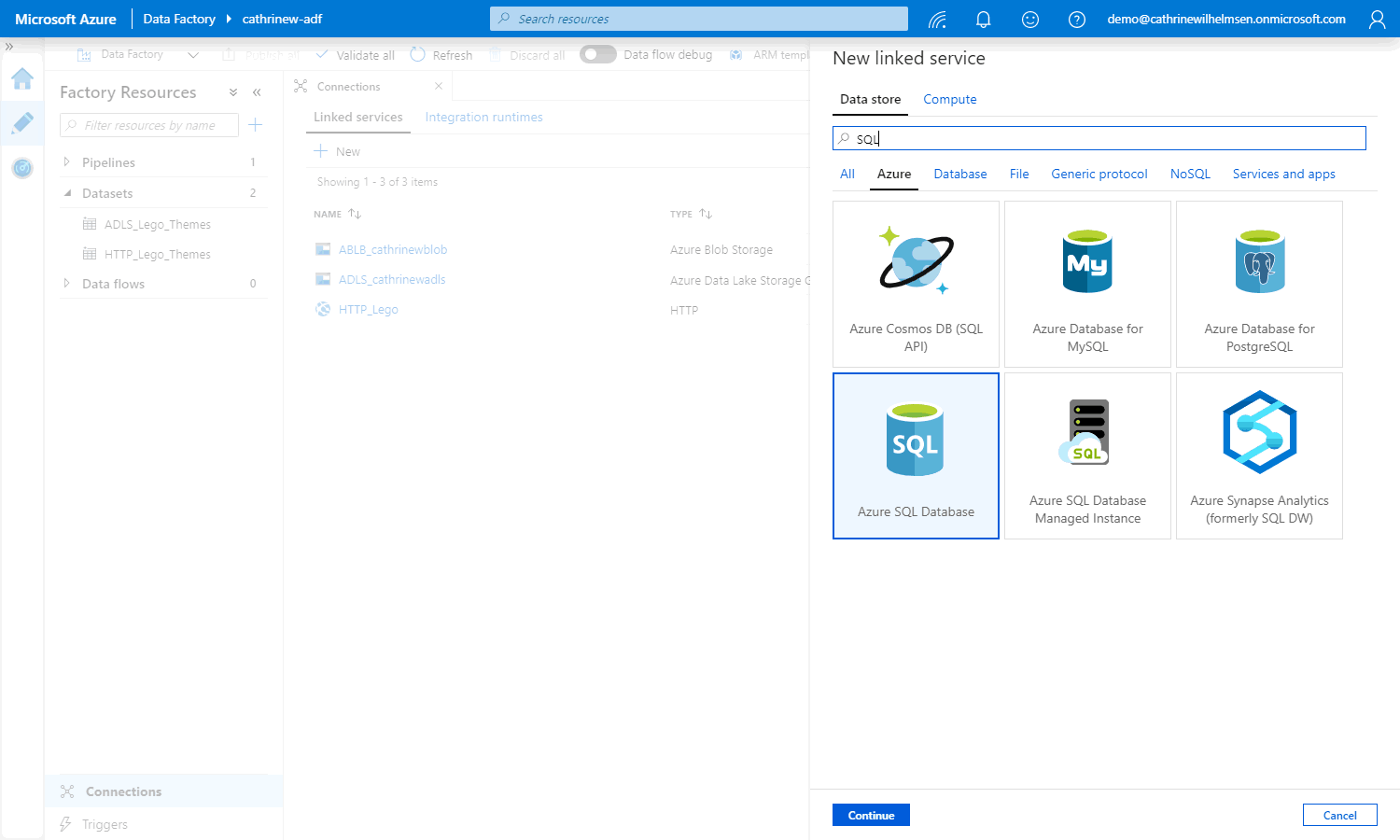

First, click Connections. Then, on the linked services tab, click New:

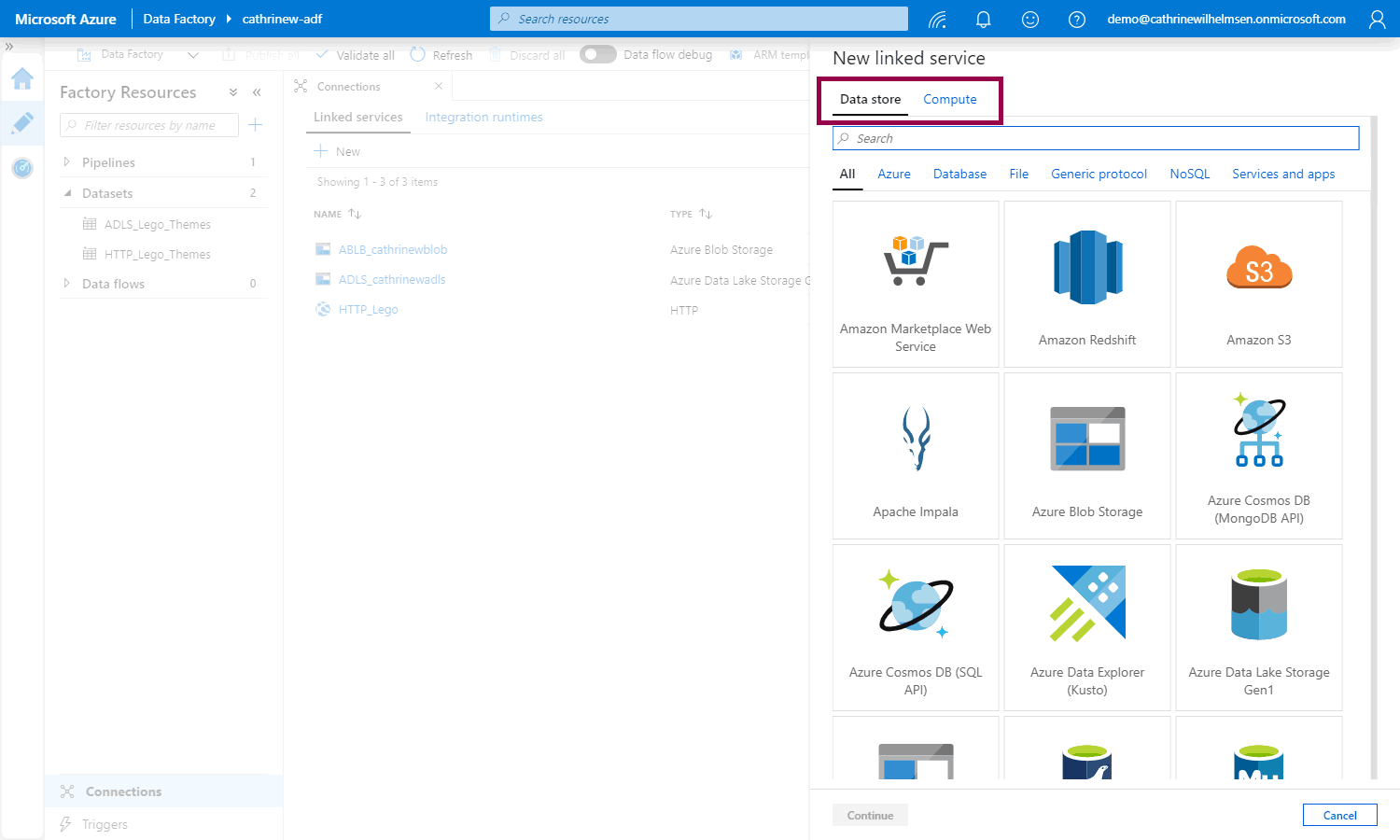

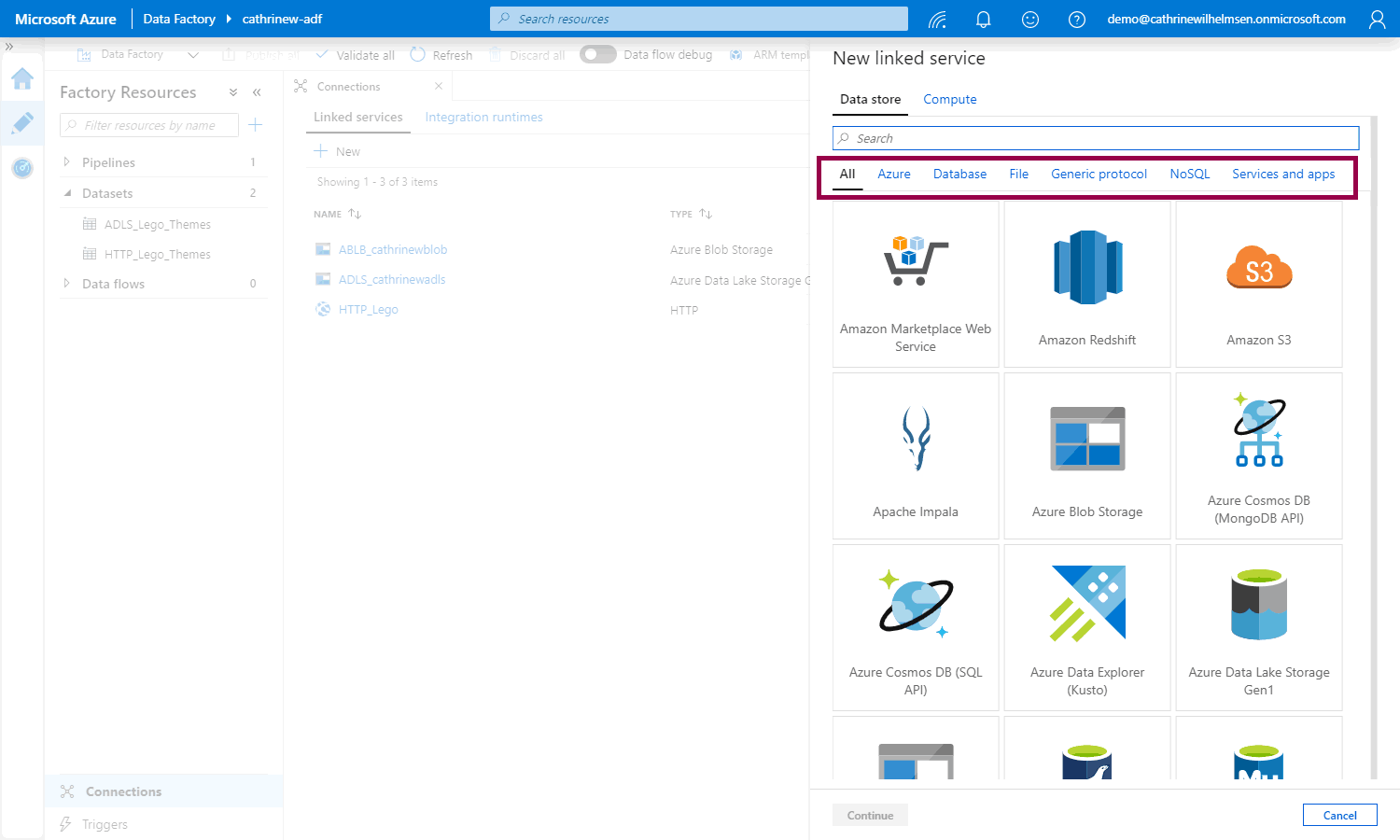

The New Linked Service pane will open. The Data Store tab shows all the linked services you can get data from or read data to:

Since there are so many data store linked services, they have been divided into sub-categories for easier navigation:

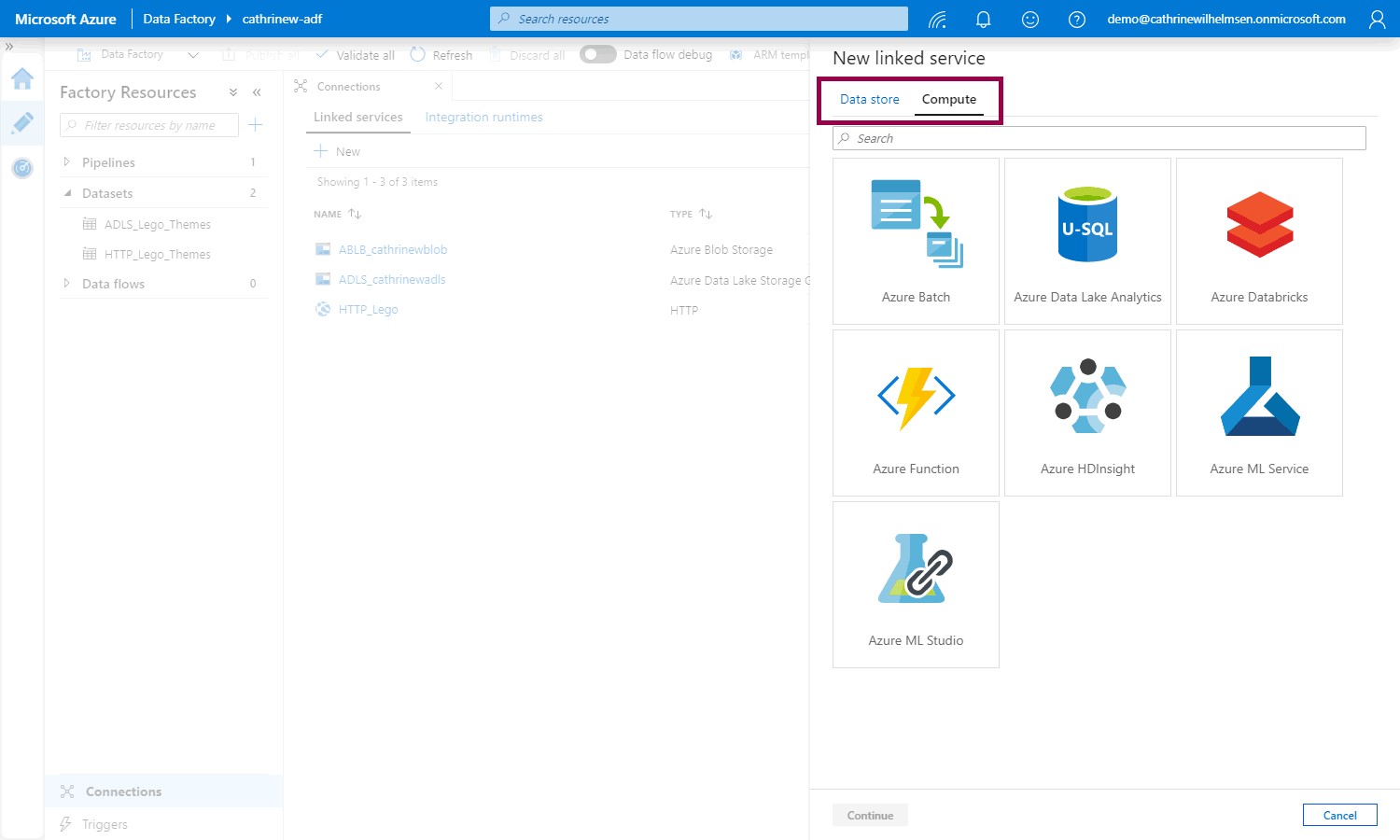

The Compute tab shows all third-party services that you can use outside Azure Data Factory:

And if you’re wondering how many linked services there are in total…

…there are many 🤩

So! Since we can’t cover all of them, let’s look at one of the most common linked services used in Azure Data Factory. Find and click on Azure SQL Database:

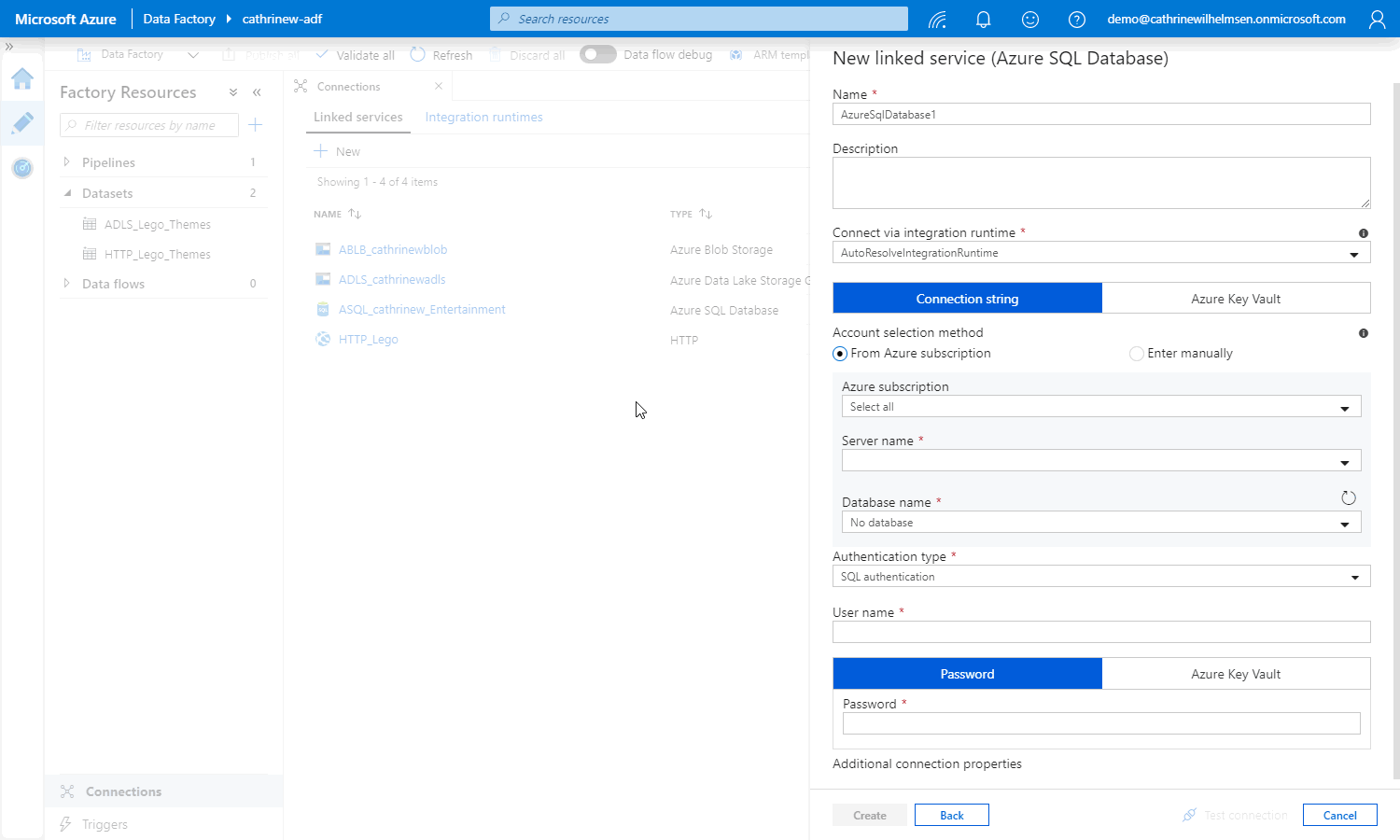

This will open up the properties pane:

Just like with the copy data activity and datasets, these properties depend entirely on the type of linked service.

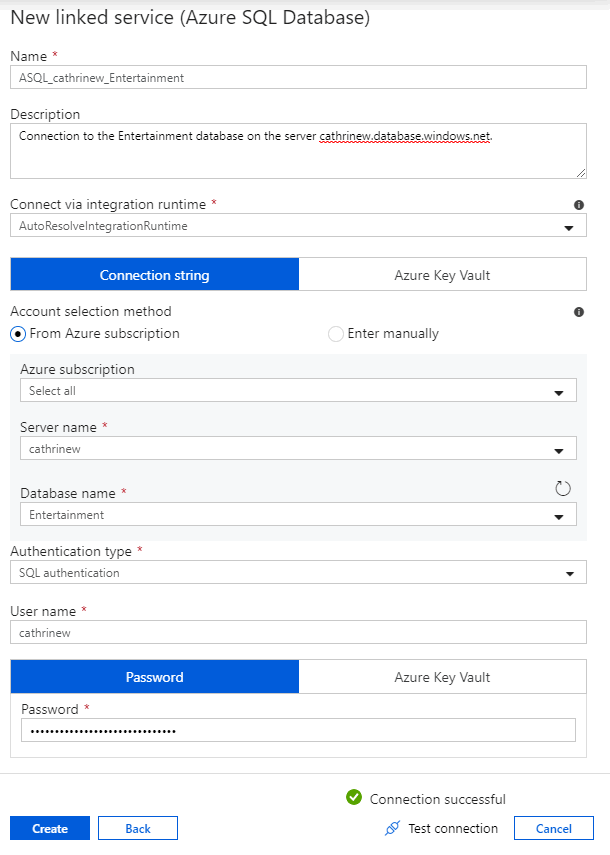

Let’s go through the Azure SQL Database properties step by step.

General Properties

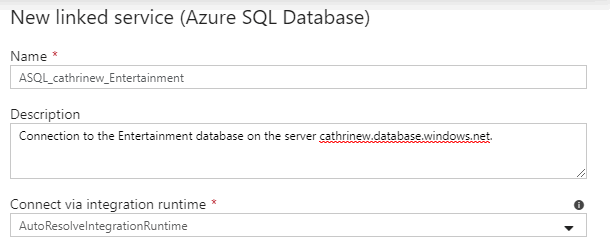

As always, provide a descriptive name and a description that makes sense to you:

Connection String

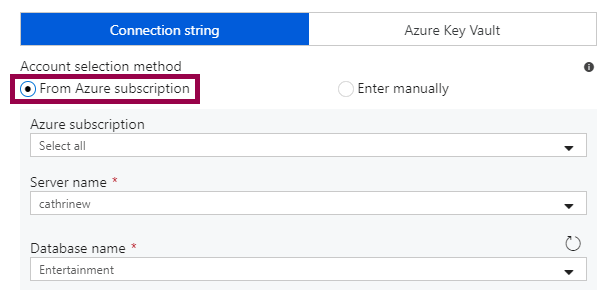

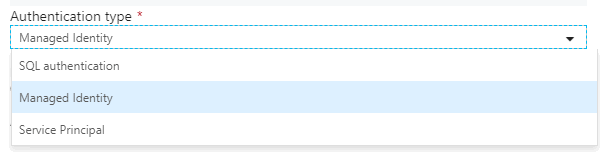

If your user has access to the subscription of the Azure SQL Database, you can use the dropdowns. Select the subscription, server, and database:

If your user does not have access to the subscription of the Azure SQL Database, you can manually enter the server and database. In this case, you need to use the fully qualified domain name:

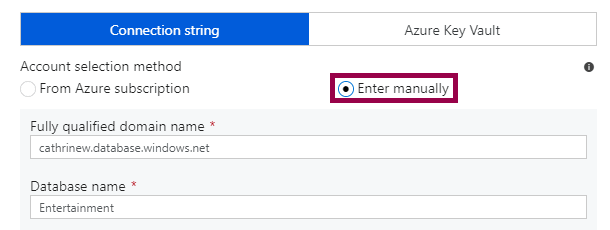

Instead of specifying the connection string directly, you can reference a secret stored in Azure Key Vault:

Azure Key Vault is a service for storing and managing secrets (like connection strings, passwords, and keys) in one central location. By storing secrets in Azure Key Vault, you don’t have to expose any connection details inside Azure Data Factory. You can connect to “the application database” without directly seeing the server, database name, or credentials used.

Authentication Type

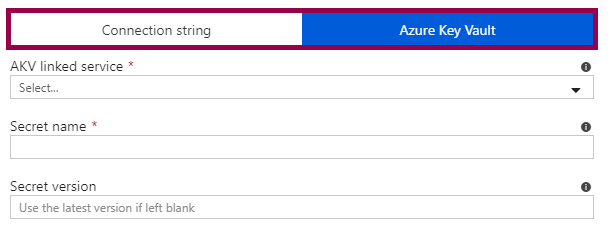

When connecting to Azure SQL Databases using a specified connection string, you can choose one of three authentication types:

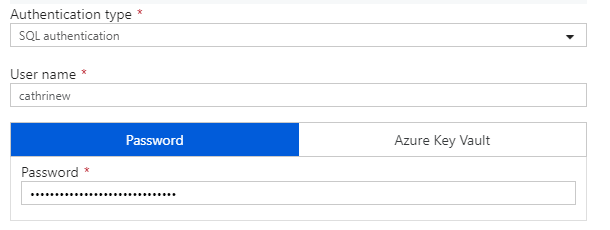

SQL Authentication

SQL authentication is the default option. In this case, you specify a username and password to connect to the database:

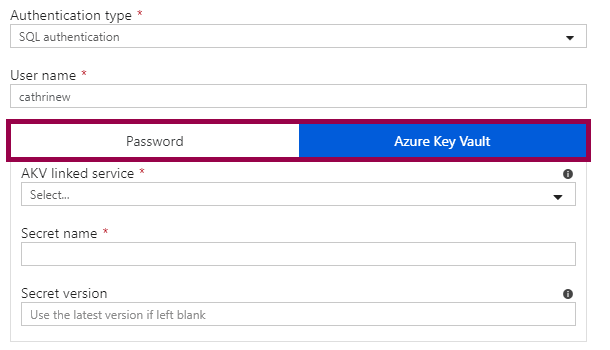

You can also store the password in Azure Key Vault:

The username can’t be referenced from Azure Key Vault, however. You either have to reference the entire connection string, or just the password.

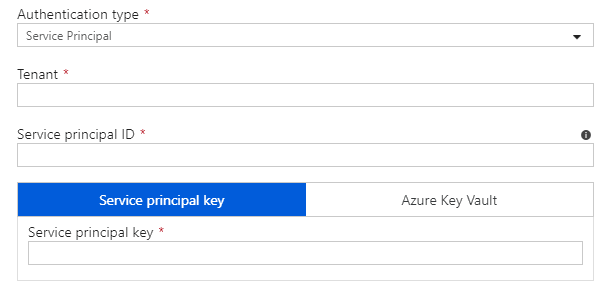

Service Principal

A Service Principal is kind of like a user, but for an Azure service instead of for an actual person. In Azure Active Directory (AAD), you create this “user” for your Azure Data Factory. Then, you grant the Azure Data Factory access to your database. Read more and see how to do this in the official documentation. In the linked service, you then specify the tenant, service principal ID, and service principal key (either directly or using Azure Key Vault):

My advice? Don’t use Service Principals, unless someone requires it for some reason. It used to be the only way to connect to an Azure SQL Database without a username or password. Now, you also have managed identities.

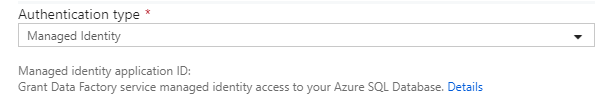

Managed Identity

A Managed Identity is a type of service principal, but it is entirely managed by Azure. When you create an Azure Data Factory, Azure automatically creates the managed identity for it. You don’t have to create or maintain it, you only have to grant it access to your database. Read more and see how to do this in the official documentation. In the linked service, you don’t have to specify anything else:

My advice? Use Managed Identities whenever possible. This is the preferred authentication method for Azure services. It does require a few more steps to set up, but then you don’t have to worry about any usernames or passwords.

If you want to dig into the details of managed identities for Azure resources, have fun reading the official documentation 😊

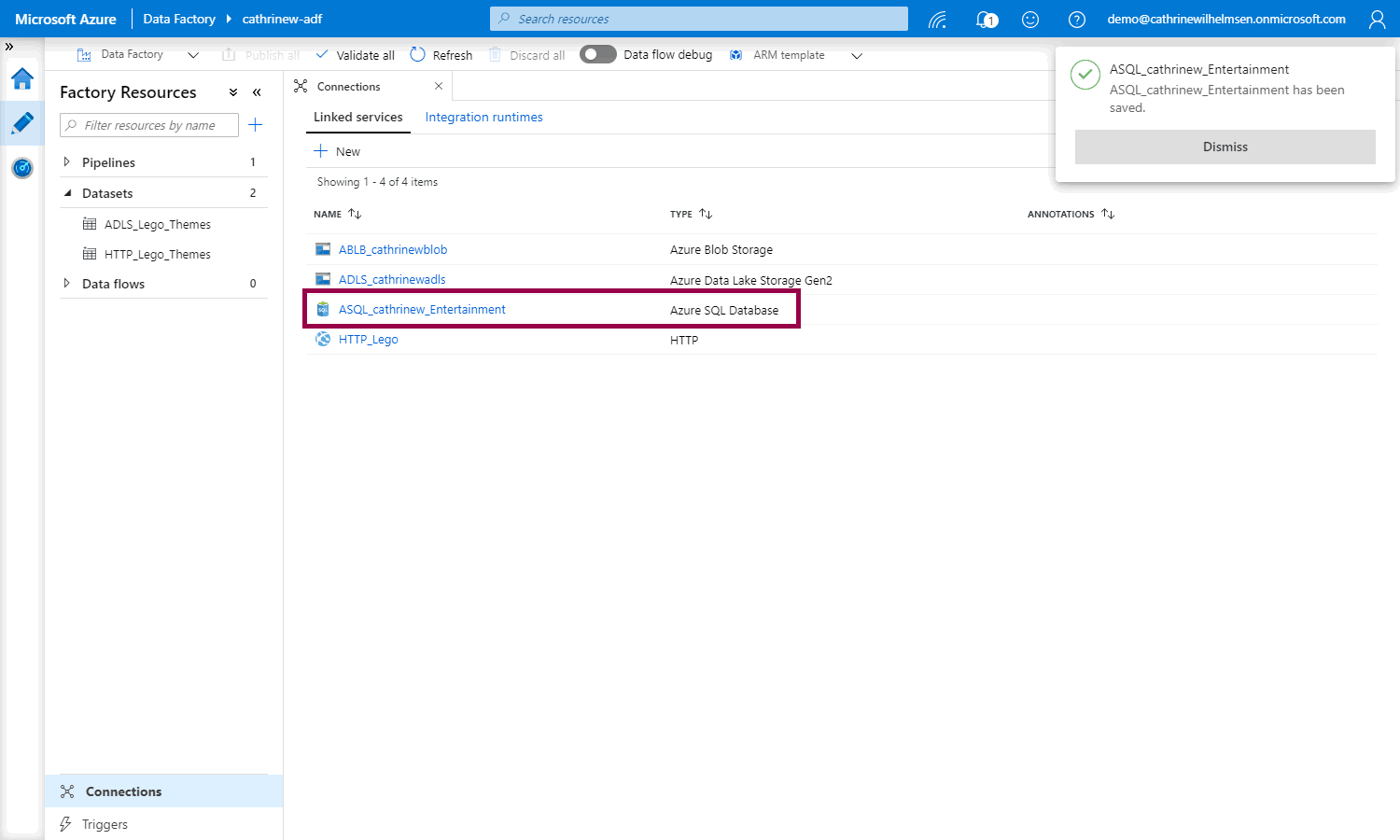

Test and Create the Linked Service

Once you have specified the connection string and chosen the authentication type, click Test Connection, then Create:

If you specify a password, instead of using an Azure Key Vault or a Managed Identity, the linked service is immediately published to the Azure Data Factory service:

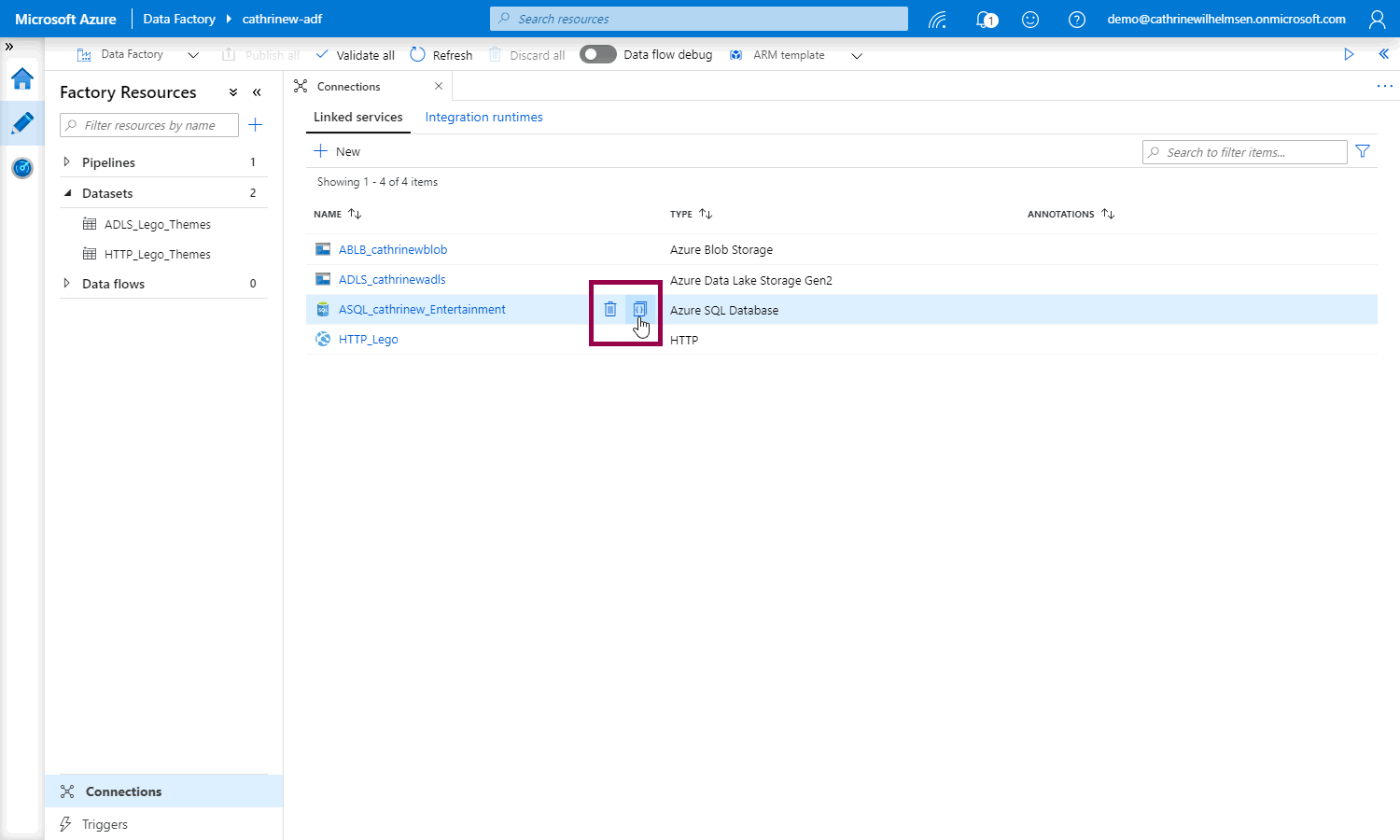

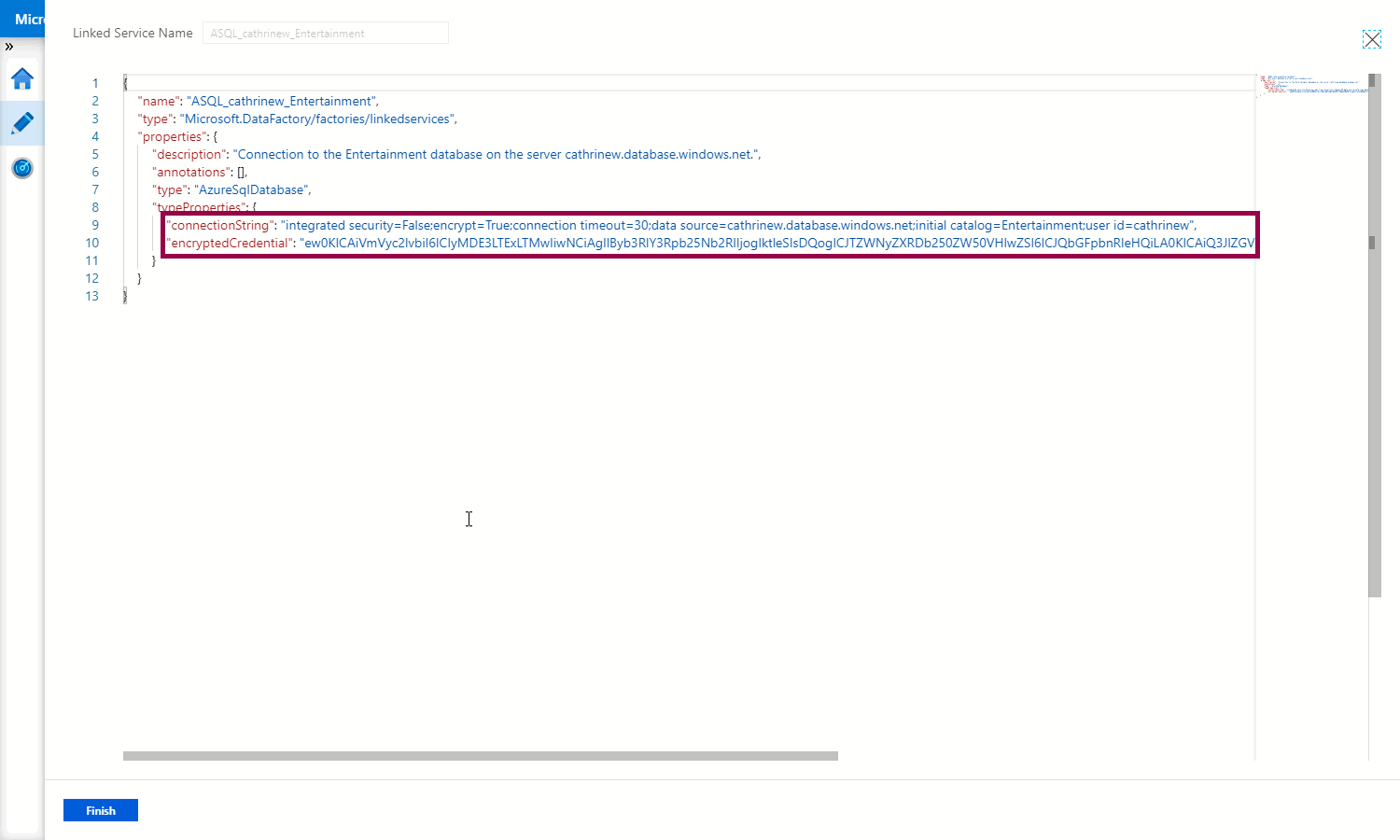

The linked service is immediately published to ensure that the password is encrypted and securely stored. This means that there is no way for you to get the password back out of the linked service. You can check this by hovering over the linked service and clicking the code button:

In the JSON code for the linked service, you can see that the connection string and user name is stored in plain text, while the password has been encrypted:

Summary

In this post, we looked at linked services in more detail. We walked through the properties of an Azure SQL Database connection, the different authentication methods, and explained how Azure Key Vault and Managed Identities can be used.

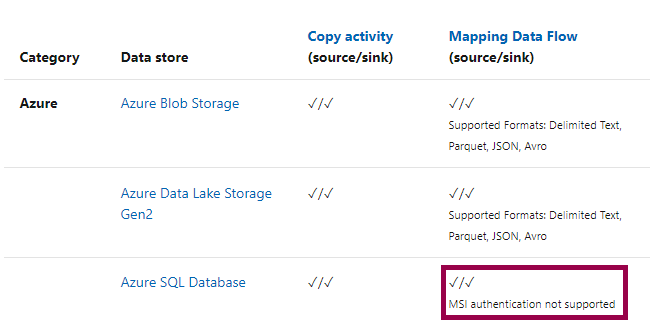

Since we can’t cover all the linked services in detail, I recommend bookmarking and referencing both the data store connector overview and compute services overview while developing your own solution.

At this point, you may be wondering “hey Cathrine, why did you tell me to use a managed identity, but then you created a linked service using a username and password?”

Excellent question! 😅

And the short answer is… I kind of have to for the next post in this series. As of right now, mapping data flows don’t support connecting to Azure SQL Database using a Managed Identity:

And in the next post, we will take a look at… you guessed it… data flows!

About the Author

Cathrine Wilhelmsen is a Microsoft Data Platform MVP, international speaker, author, blogger, organizer, and chronic volunteer. She loves data and coding, as well as teaching and sharing knowledge - oh, and sci-fi, gaming, coffee and chocolate 🤓

Cathrine Wilhelmsen is a Microsoft Data Platform MVP, international speaker, author, blogger, organizer, and chronic volunteer. She loves data and coding, as well as teaching and sharing knowledge - oh, and sci-fi, gaming, coffee and chocolate 🤓