Debugging Pipelines in Azure Data Factory

In the previous post, we looked at orchestrating pipelines using branching, chaining, and the execute pipeline activity. In this post, we will look at debugging pipelines. How do we test our solutions?

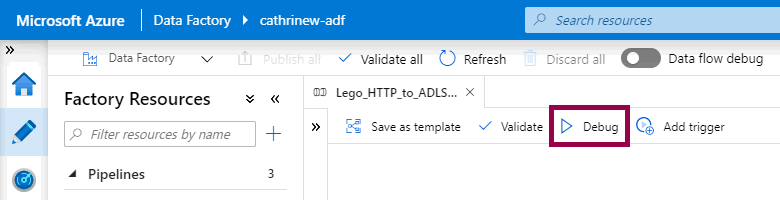

You debug a pipeline by clicking the debug button:

Tadaaa! Blog post done? 😂

I joke, I joke, I joke. Debugging pipelines is a one-click operation, but there are a few more things to be aware of. In the rest of this post, we will look at what happens when you debug a pipeline, how to see the debugging output, and how to set breakpoints.

Pssst! The debugging experience has had a huge makeover since I first wrote this post. I'm working on updating the descriptions and screenshots, thank you for your understanding and patience 😊

Debugging Pipelines

Let’s start with the most important thing:

When you debug a pipeline, you execute the pipeline. If you have a copy data activity, the data will be copied. If you truncate tables or delete files, you will truncate the tables and delete the files.

This means that you need to make sure that you are either:

- Debugging in a separate development or test environment

- Using test connections, folders, files, tables, etc.

You may also want to limit your queries and datasets, unless you are testing your pipeline performance.

All clear? You now definitely know not to debug anything in production unless you’re really, really, really sure it won’t break anything? Yes? Excellent! 😁

So!

How do I debug a pipeline?

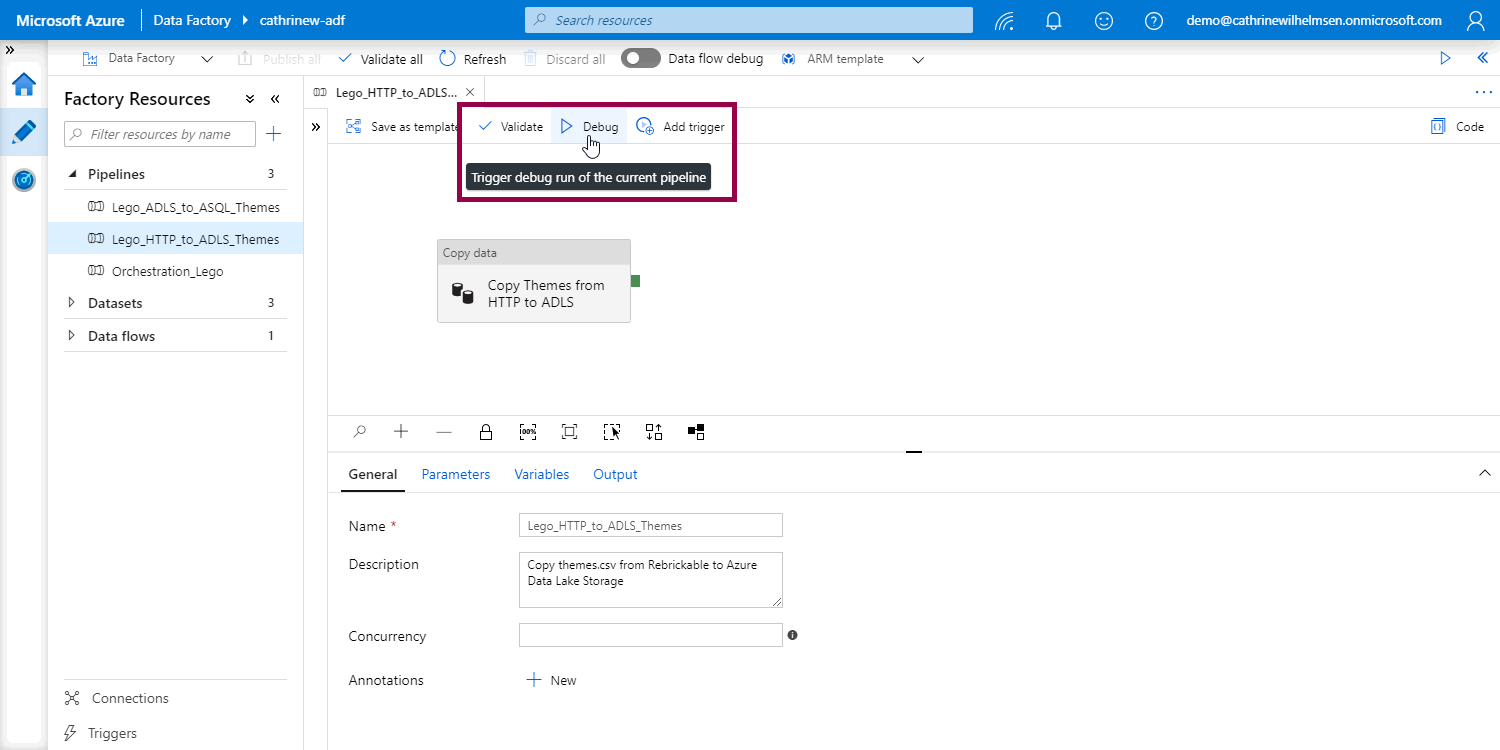

Again, you debug a pipeline by clicking the debug button:

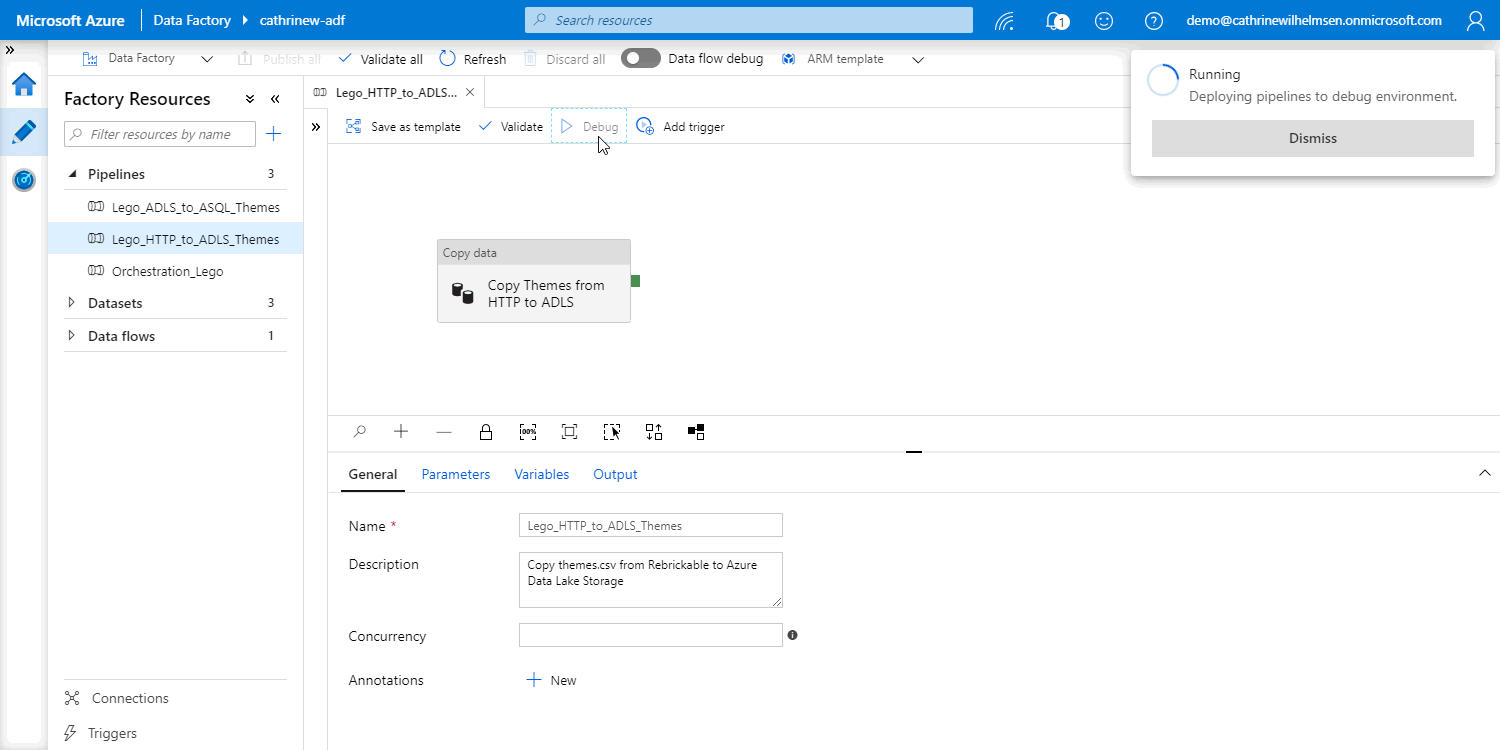

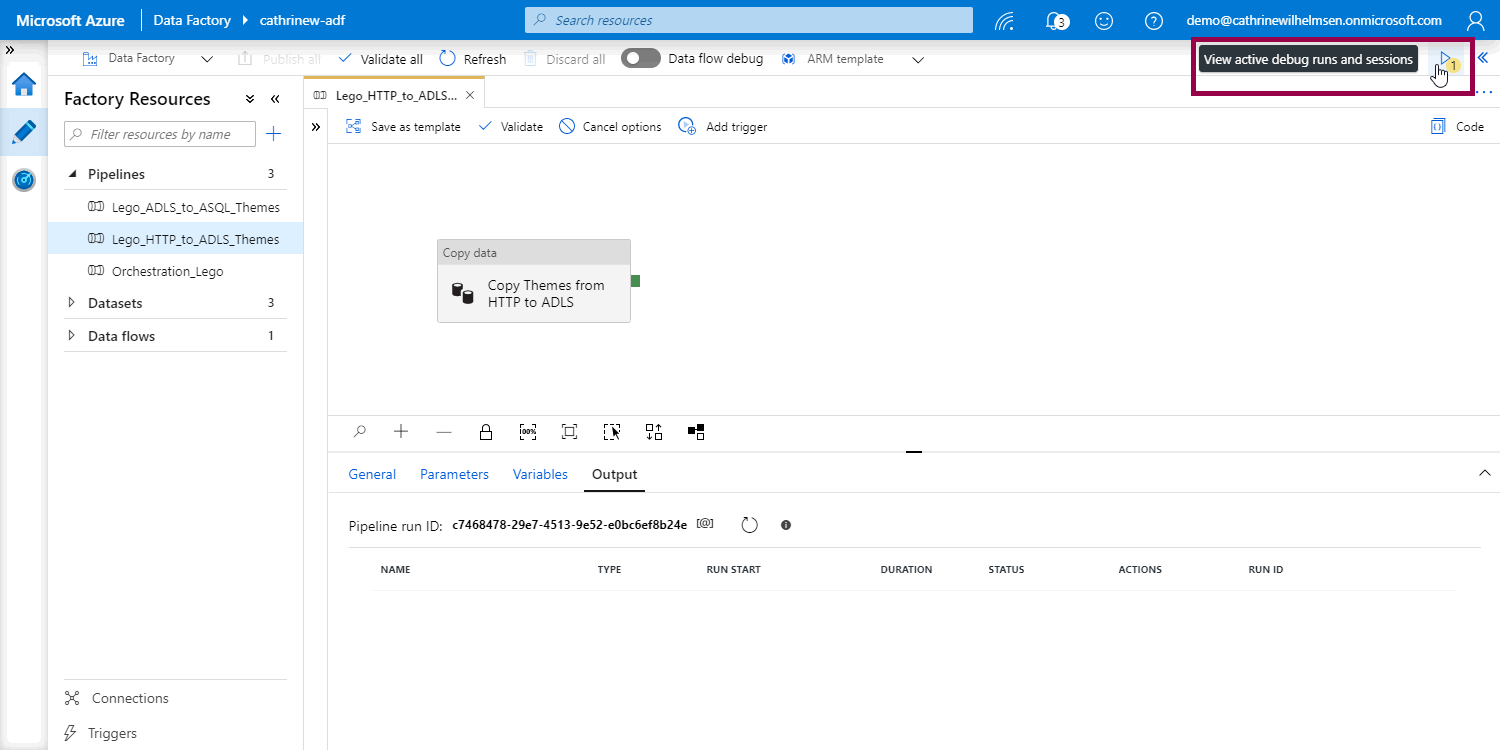

This starts the debug process. First, Azure Data Factory deploys the pipeline to the debug environment:

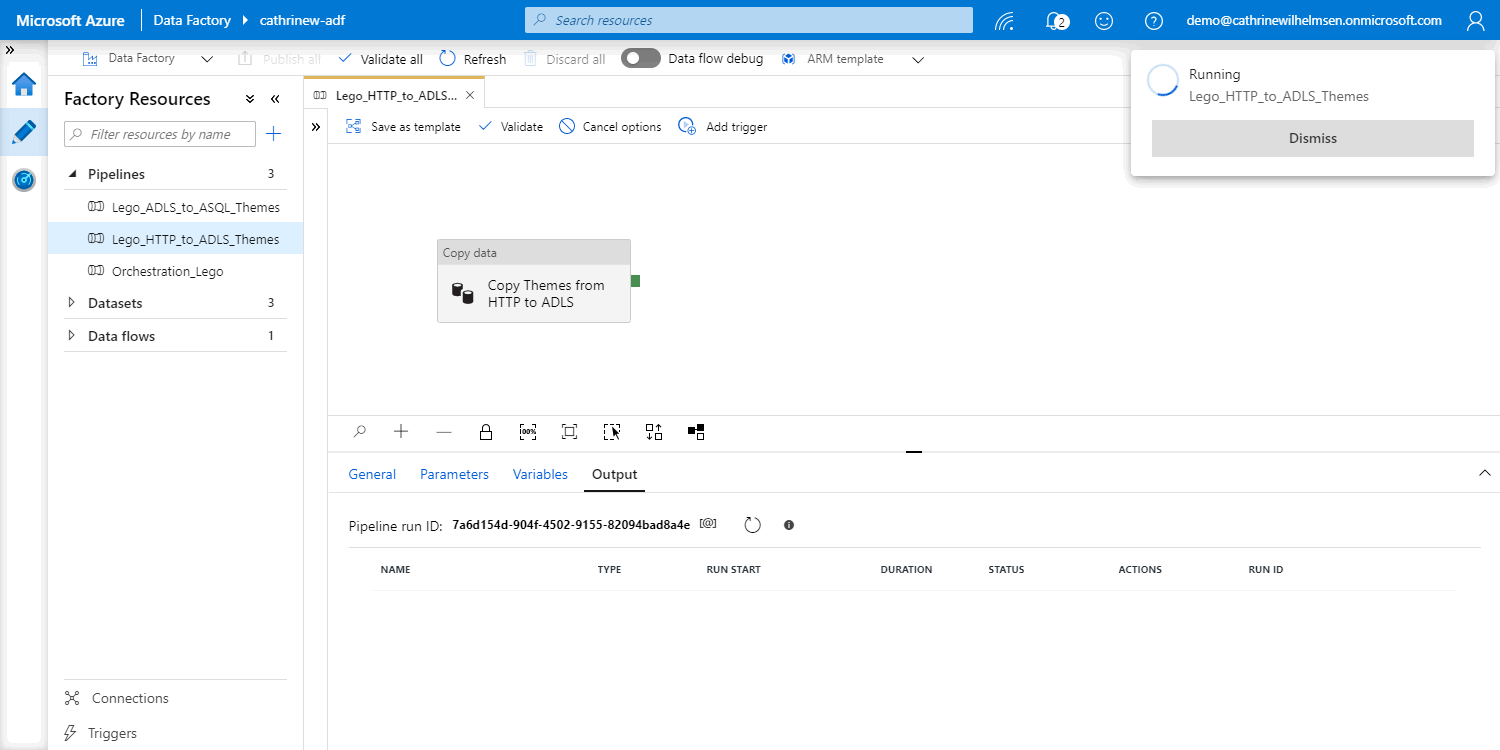

Then, it runs the pipeline. This opens the output pane where you will see the pipeline run ID and the current status. The status will be updated every 20 seconds for 5 minutes. After that, you have to manually refresh. The tab border also changes color to yellow, so you can see which pipelines are currently running:

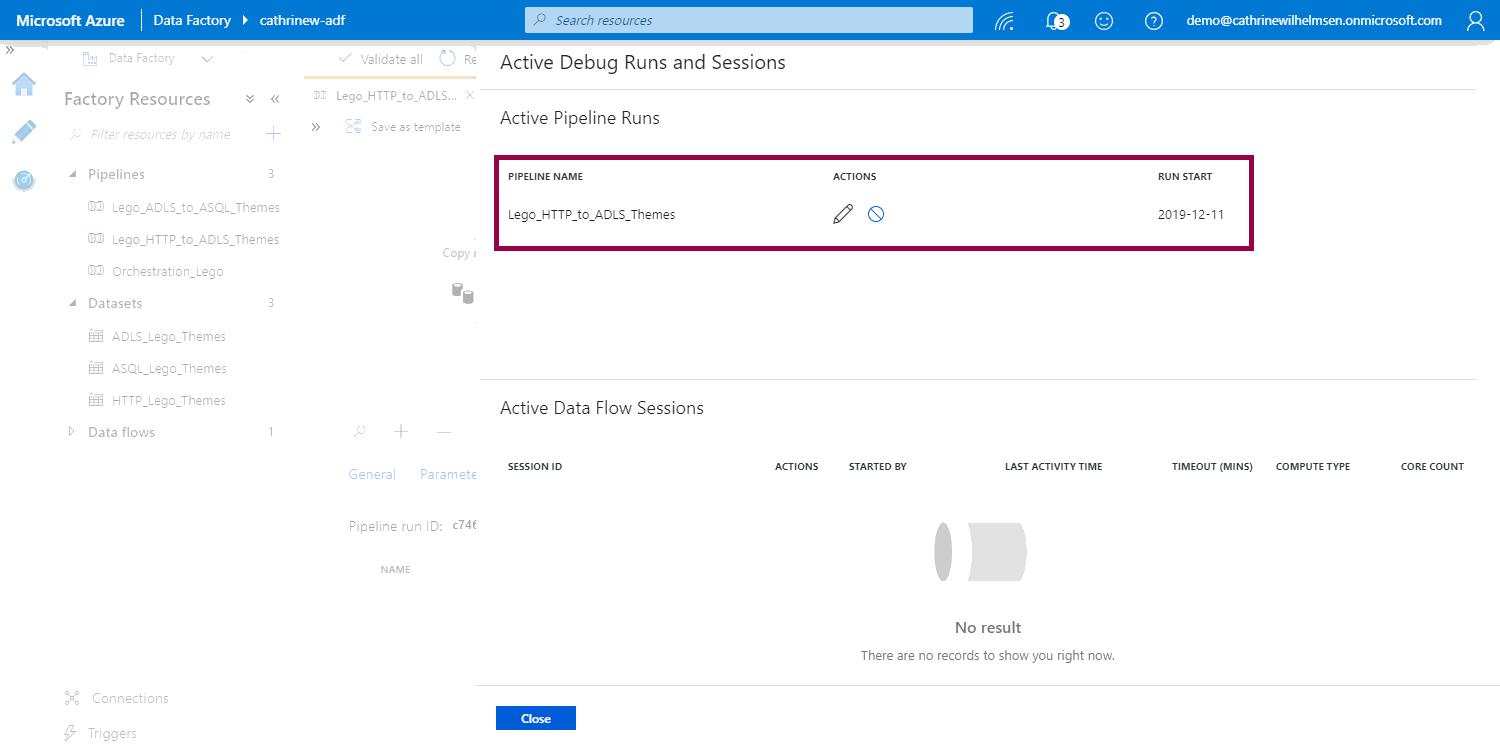
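If you want to picture that refresh behavior in code, here is a minimal Python sketch of a polling loop with the same 20 second interval and 5 minute cutoff. It does not call Azure Data Factory at all. The `poll_status` function, the `get_status` callback, and the status names are assumptions made up for this illustration, so swap in whatever your own monitoring code returns.

```python
import time
from typing import Callable, List

# Assumed names for "the run is finished" statuses (illustrative only).
TERMINAL_STATUSES = {"Succeeded", "Failed", "Cancelled"}


def poll_status(
    get_status: Callable[[], str],
    interval_seconds: float = 20,
    timeout_seconds: float = 300,
    sleep: Callable[[float], None] = time.sleep,
) -> List[str]:
    """Call get_status every interval_seconds until the run finishes
    or the timeout is reached. Returns every status that was seen."""
    seen: List[str] = []
    max_polls = int(timeout_seconds // interval_seconds)  # 300 // 20 = 15 polls
    for _ in range(max_polls):
        status = get_status()
        seen.append(status)
        if status in TERMINAL_STATUSES:
            break  # the run is done, no need to keep polling
        sleep(interval_seconds)
    return seen


# Example with a fake status source and a no-op sleep, so nothing actually waits.
fake_statuses = iter(["Queued", "InProgress", "Succeeded"])
history = poll_status(lambda: next(fake_statuses), sleep=lambda seconds: None)
print(history)
```

Passing the `sleep` function in as a parameter is only there to make the sketch easy to try without waiting five minutes. After the cutoff the loop simply stops, which is the code version of "now you have to refresh manually".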

You can also open the active debug runs pane:

Here you can see all active pipeline runs:

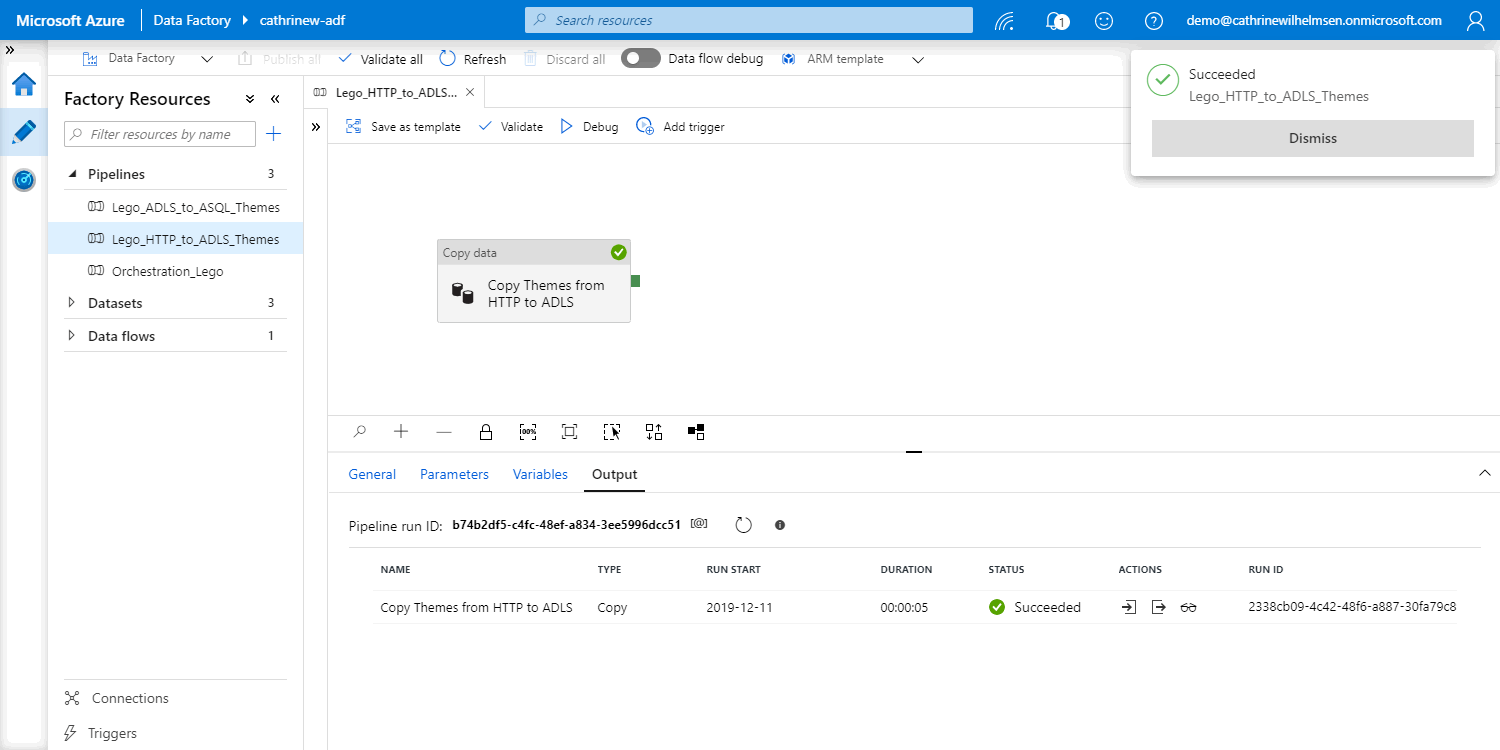

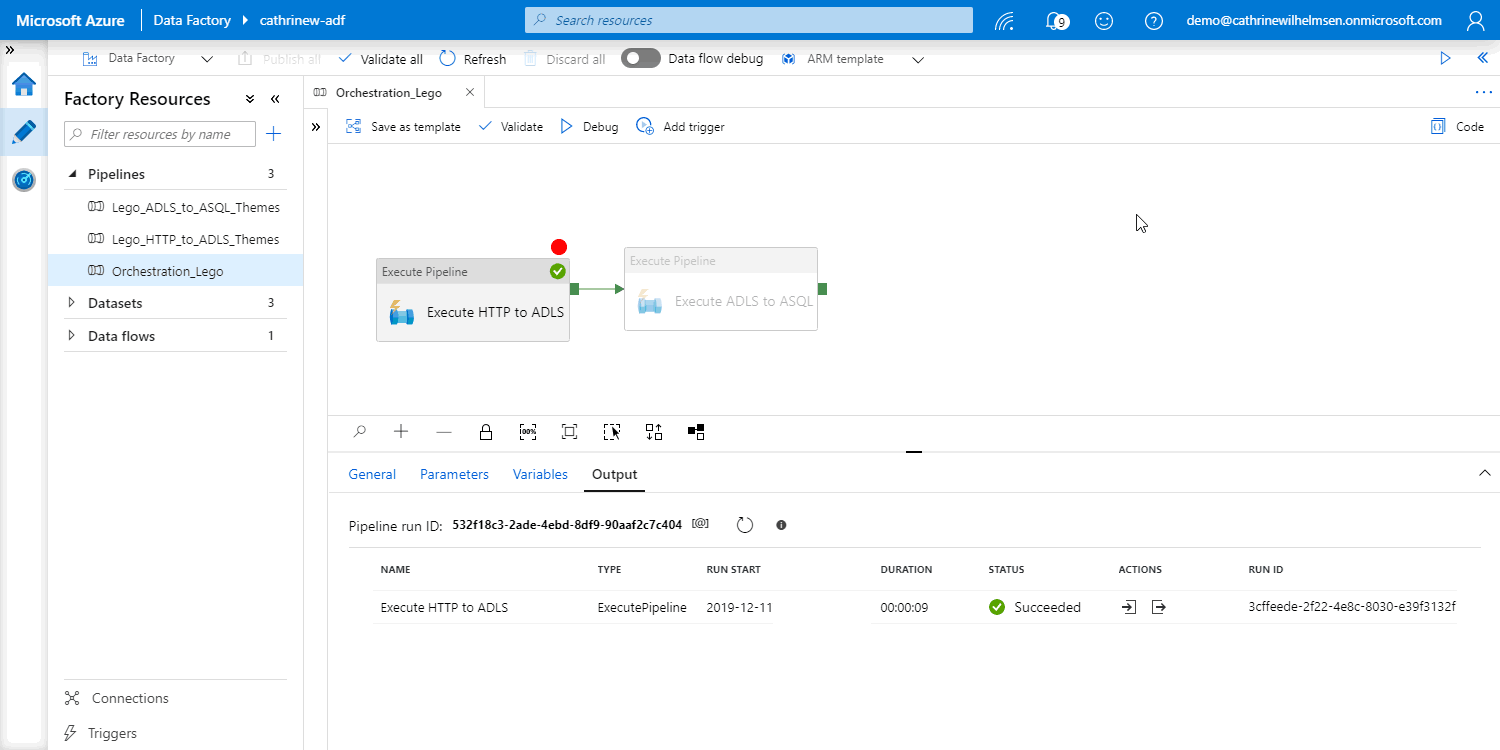

Once the pipeline finishes, you will get a notification, see an icon on the activity, and see the results in the output pane. Hopefully, everything is green and successful!

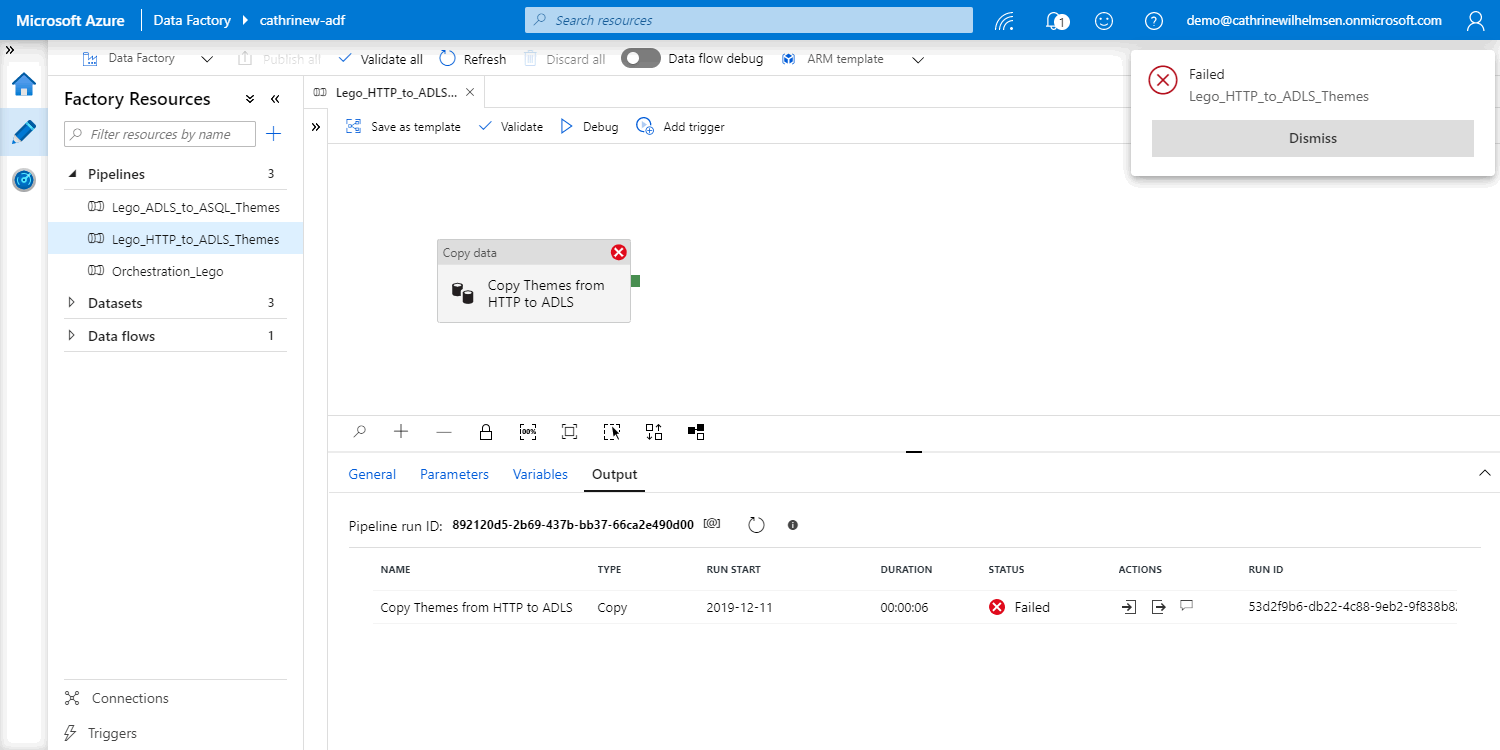

However, if everything is red and failed, that’s kind of good too, because you would rather get errors during testing and debugging than in production 🤓

How do I view the details of a debug run?

In addition to the pipeline run ID, start time, duration, and status, you can view the details of the debug run. Click the action buttons in the output pane:

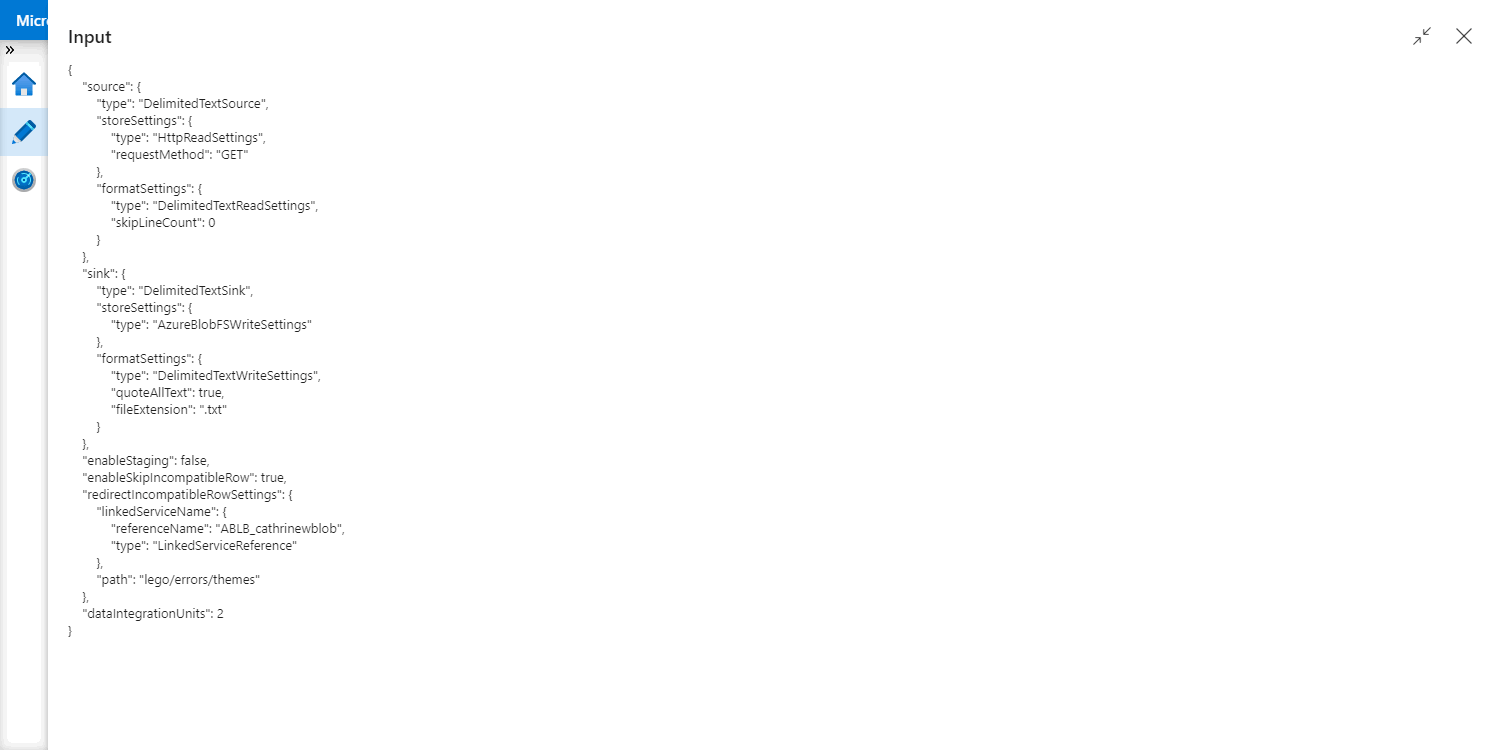

Input

Input will show you details about the activity itself - in JSON format. In this example, we recognize the settings from the copy data activity, including the number of data integration units used:

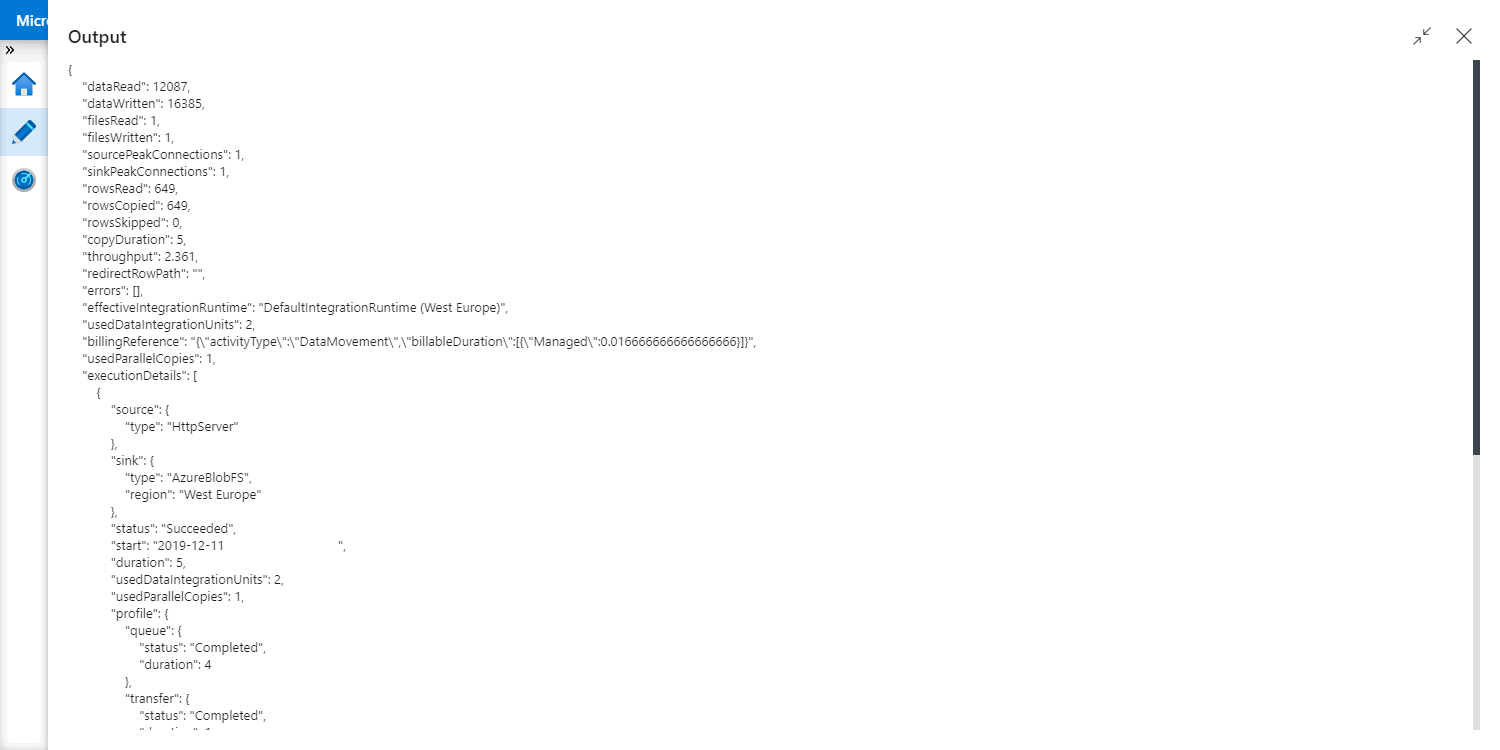

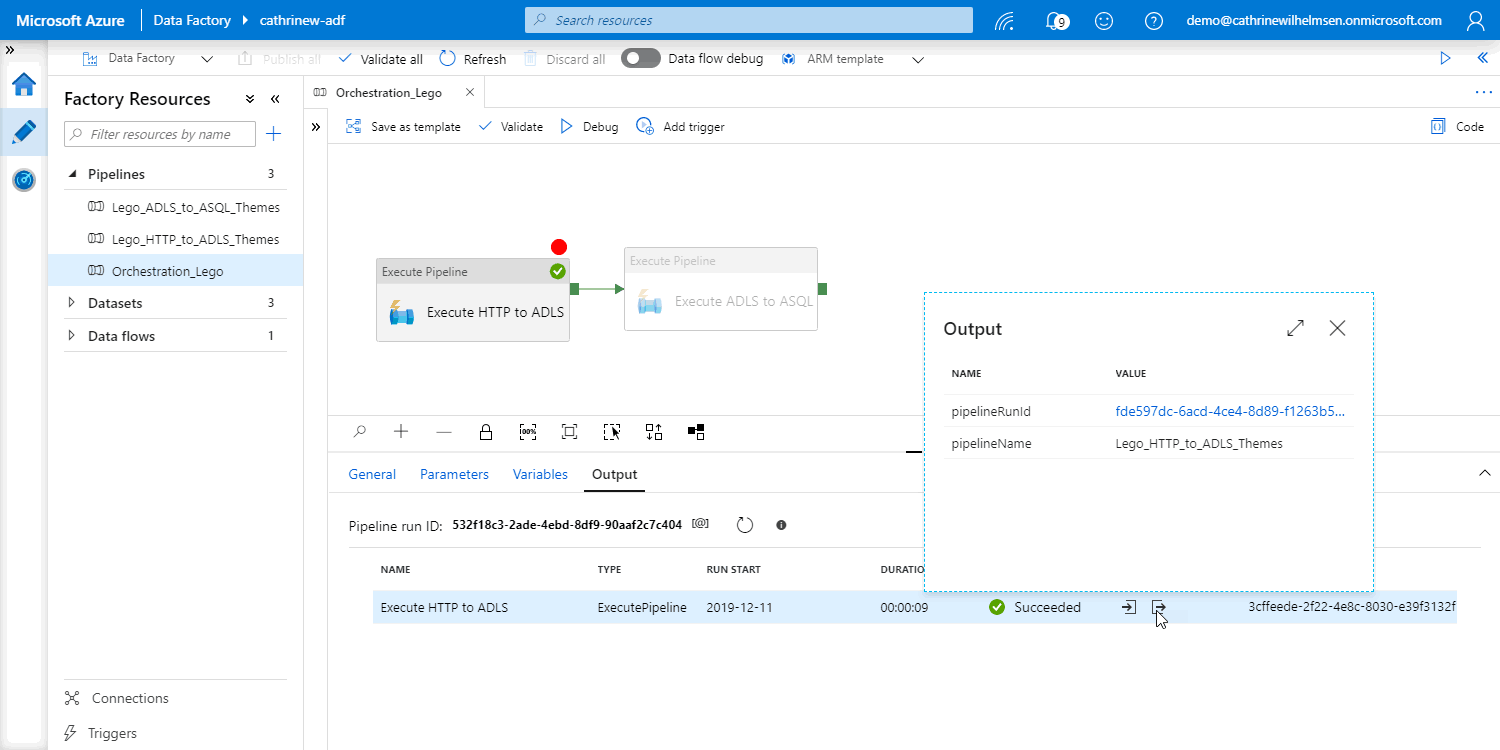

Output

Output will show you details about the execution - in JSON format. In this example, we see information such as how much data and how many rows were copied:

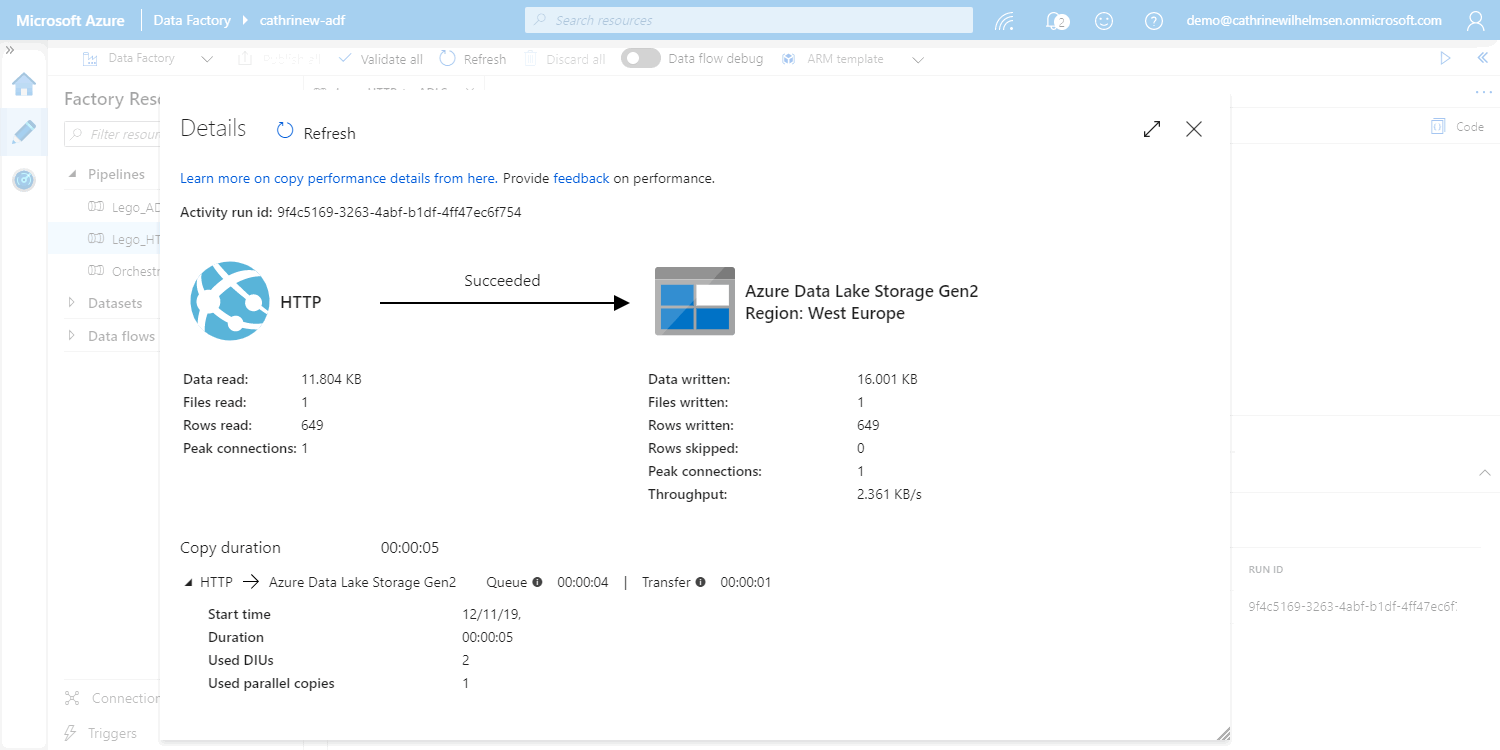
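Since the output is plain JSON, you can copy it out of the interface and pick it apart yourself. Here is a small Python sketch that summarizes a copy data activity output. The values in `sample_output` are invented for this example, the `summarize_copy_output` helper is hypothetical, and the property names are what I would expect to see in a copy data output, so check them against your own debug run before relying on them.

```python
import json

# Invented sample values, shaped like a copy data activity output.
sample_output = """
{
  "dataRead": 1048576,
  "dataWritten": 1048576,
  "rowsRead": 5000,
  "rowsCopied": 5000,
  "copyDuration": 12,
  "usedDataIntegrationUnits": 4
}
"""


def summarize_copy_output(raw_json: str) -> str:
    """Turn a copy data output JSON string into a one-line summary."""
    output = json.loads(raw_json)
    # Missing properties fall back to 0 instead of raising a KeyError.
    data_read_mb = output.get("dataRead", 0) / (1024 * 1024)
    rows_copied = output.get("rowsCopied", 0)
    duration_seconds = output.get("copyDuration", 0)
    return f"{rows_copied} rows, {data_read_mb:.2f} MB read in {duration_seconds} s"


print(summarize_copy_output(sample_output))
```

With the sample values above, this prints `5000 rows, 1.00 MB read in 12 s`.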

Details

Details will show you much of the same information as output, but in a visual interface. In this example, we see the source and sink type icons, as well as information about data and rows:

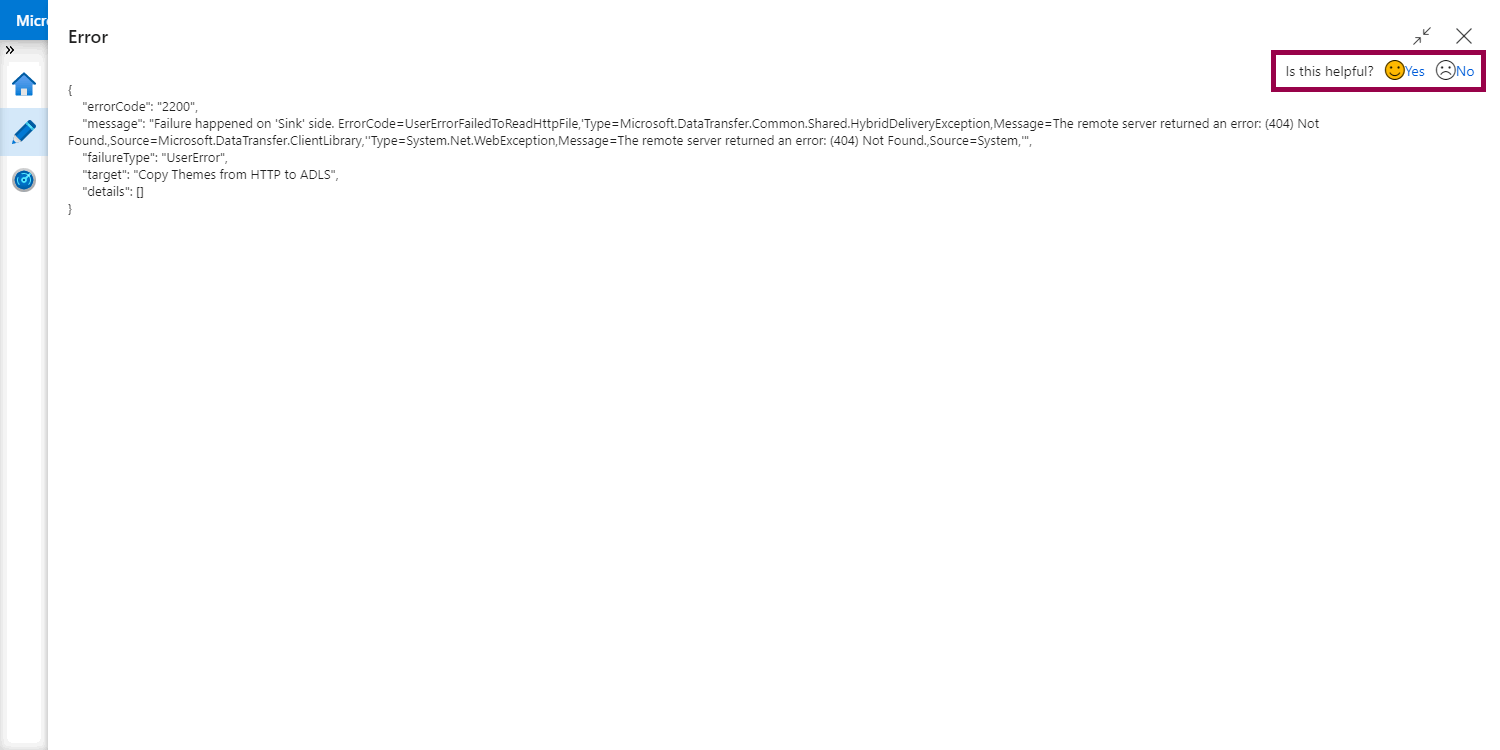

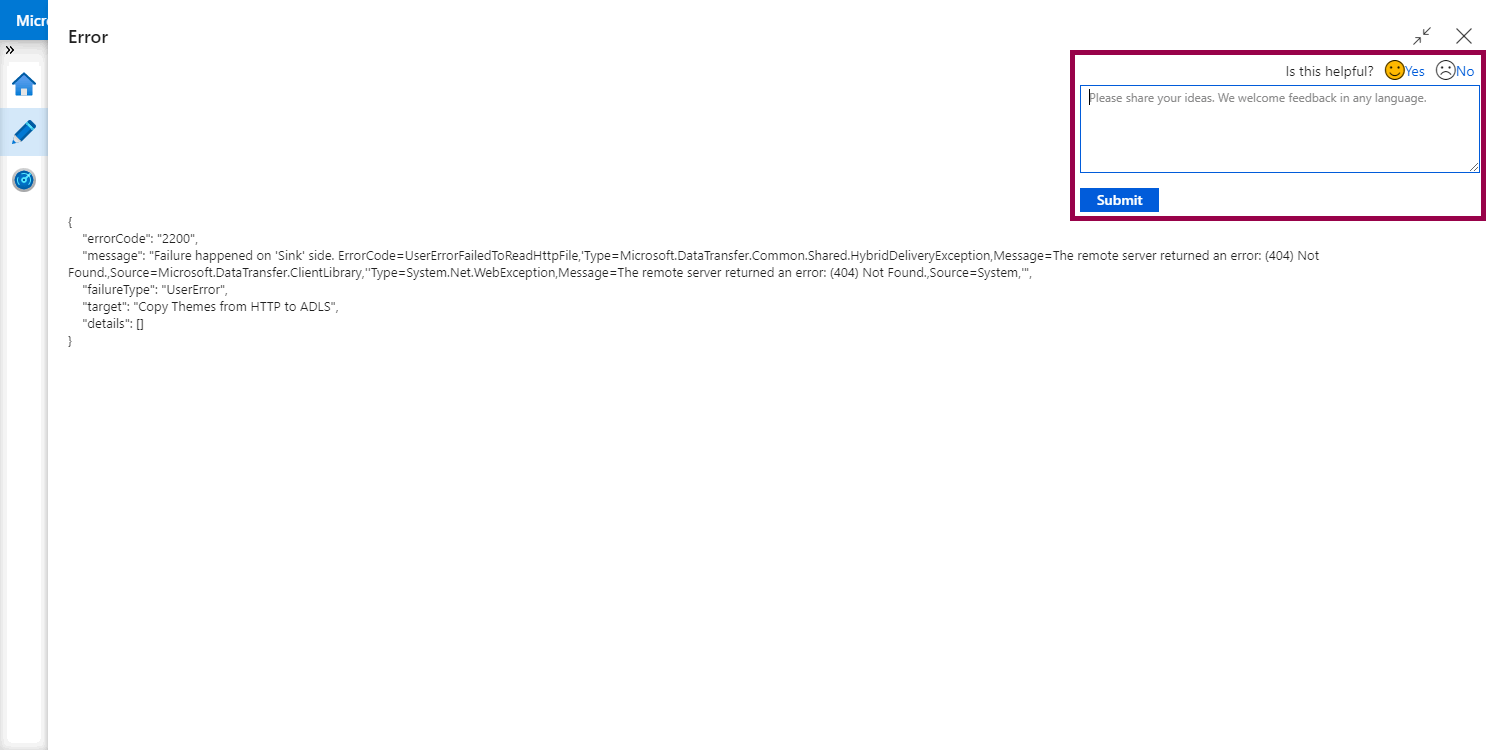

Error

Error will show you the error code and error message - in JSON format. You can also provide feedback on these messages, directly in the interface! Click the emojis:

Then write your message and click submit:

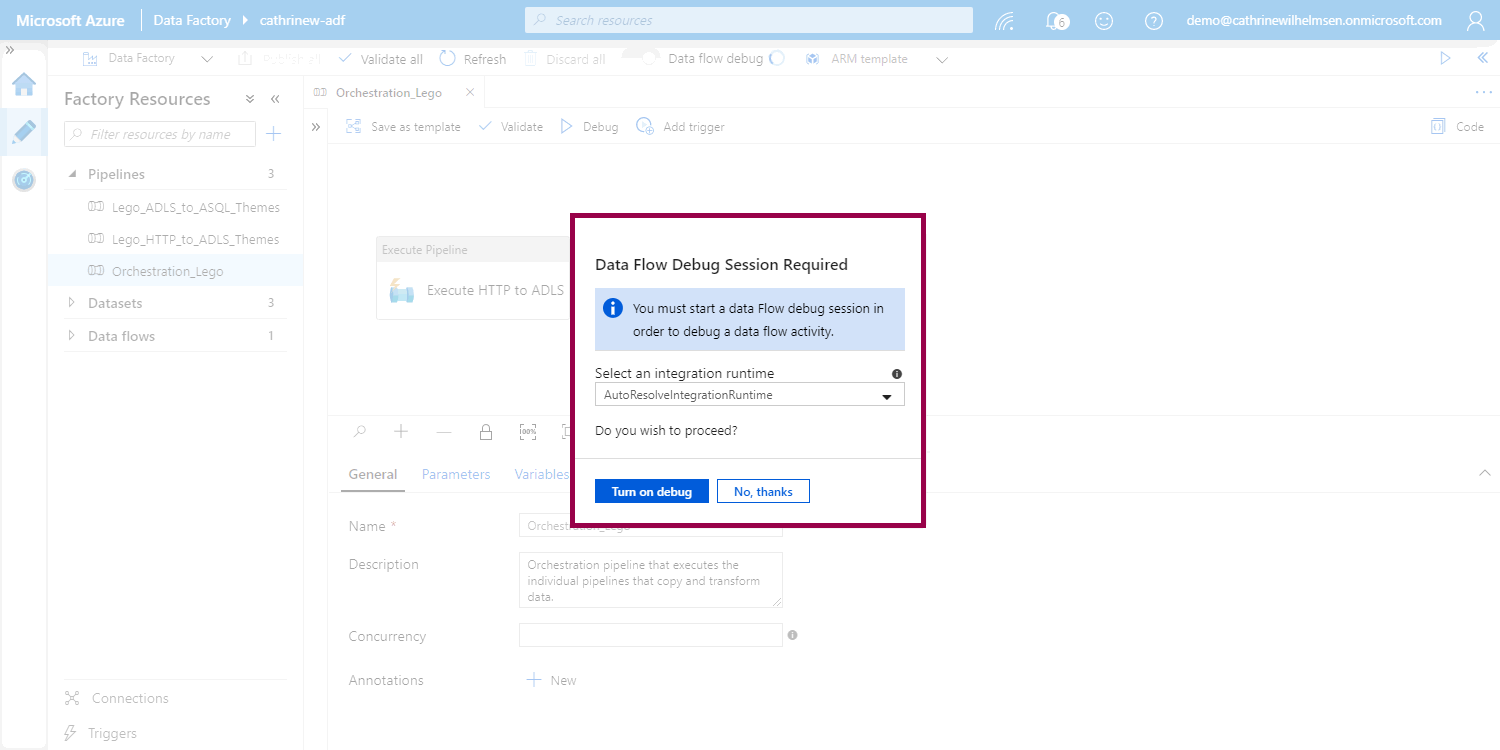

How do I debug data flows?

Debugging data flows is quite different from debugging pipelines. For example, it requires you to start a debug session. If we try to debug our orchestration pipeline, it will ask us to start a new session:

Now, I’m going to refer to smarter people than me again, just like I did in the data flows post 😅 You can read all the details about mapping data flows debug mode in the official documentation.

But this leads us to the next part of this post. What if we want to debug the orchestration pipeline without starting a debug session?

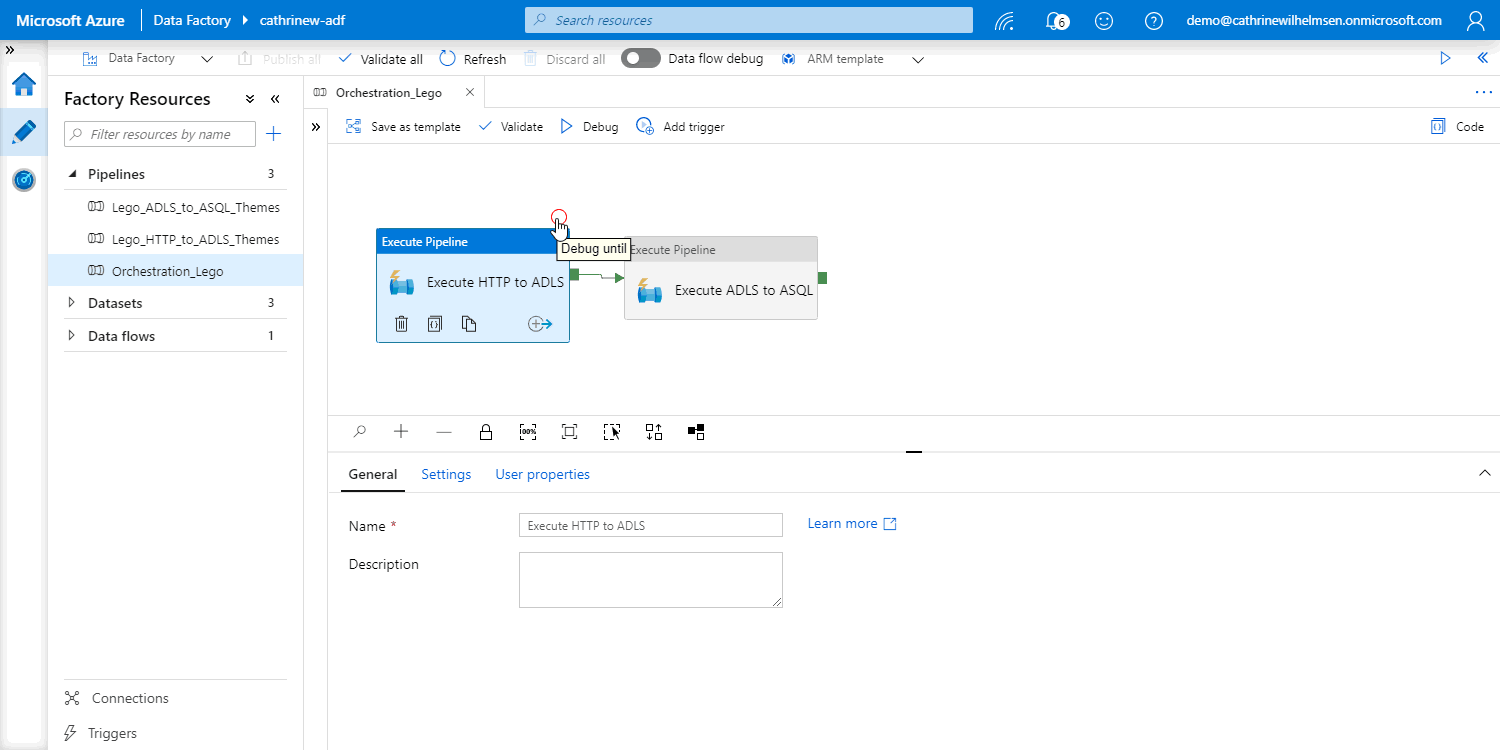

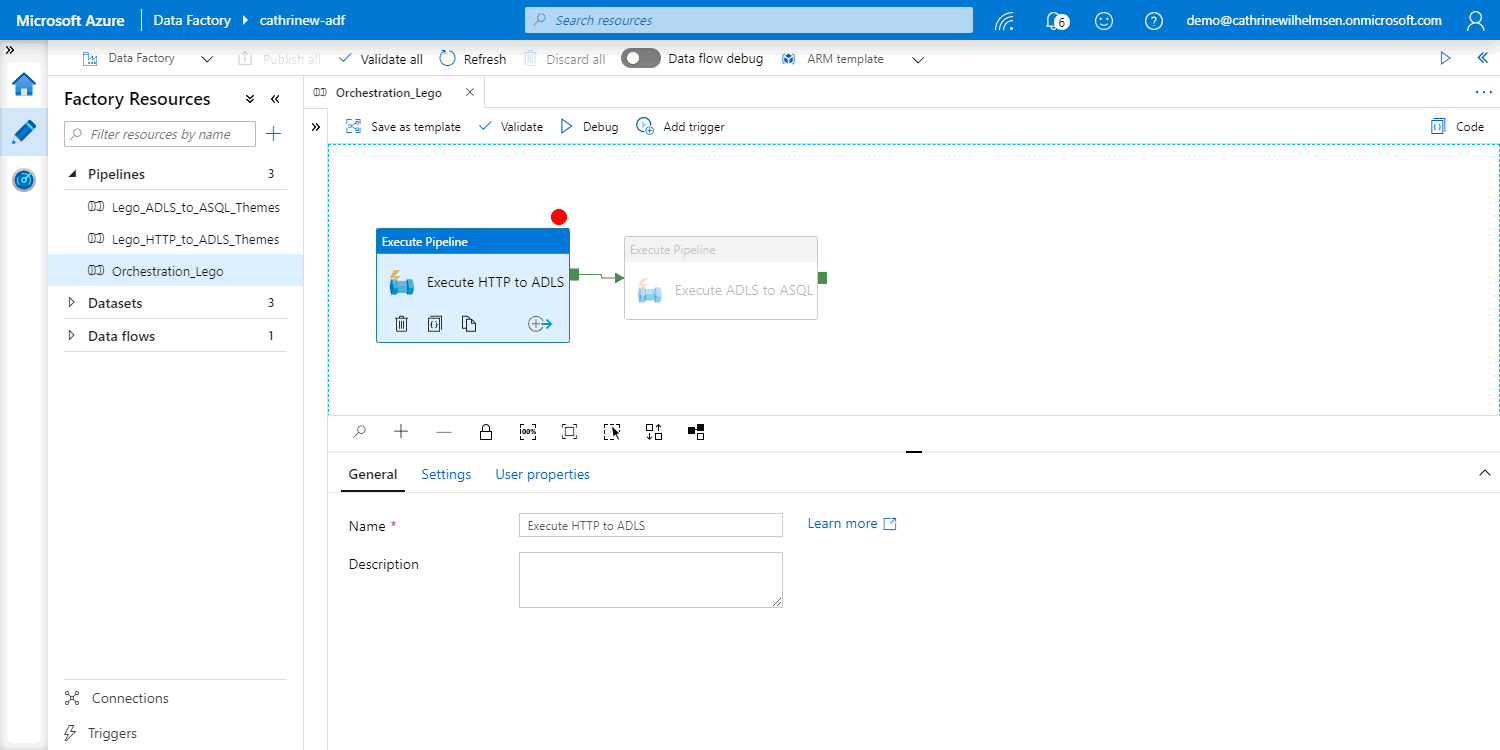

How do I debug specific activities?

In Azure Data Factory, you can set breakpoints on activities:

When you set a breakpoint, the activities after that breakpoint will be disabled:

You can now debug the pipeline, and only the activities up to and including the activity with the breakpoint will be executed:

As of right now, you can only “debug until” a breakpoint. There is no way to “debug from” or “debug single activity”. That’s why we separated our logic into individual pipelines 😊
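To make the breakpoint rule concrete, here is a tiny Python sketch. It assumes the simplest possible case, a pipeline where the activities are chained one after another, and the activity names and the `activities_to_run` helper are made up for this illustration. It is a way to reason about the behavior, not how Azure Data Factory implements it.

```python
from typing import List


def activities_to_run(chain: List[str], breakpoint_activity: str) -> List[str]:
    """Return the activities that run when debugging until a breakpoint:
    everything up to and including the activity with the breakpoint."""
    # list.index raises ValueError if the activity is not in the chain.
    index = chain.index(breakpoint_activity)
    return chain[: index + 1]


# Hypothetical pipeline with three chained activities.
pipeline = ["Lookup Files", "Copy Data", "Log Results"]
print(activities_to_run(pipeline, "Copy Data"))
```

With the breakpoint on `Copy Data`, the result is `['Lookup Files', 'Copy Data']`, and `Log Results` is skipped. Notice that there is no way to express "start from `Copy Data`" here, which matches the "debug until" limitation.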

Oh! One last thing:

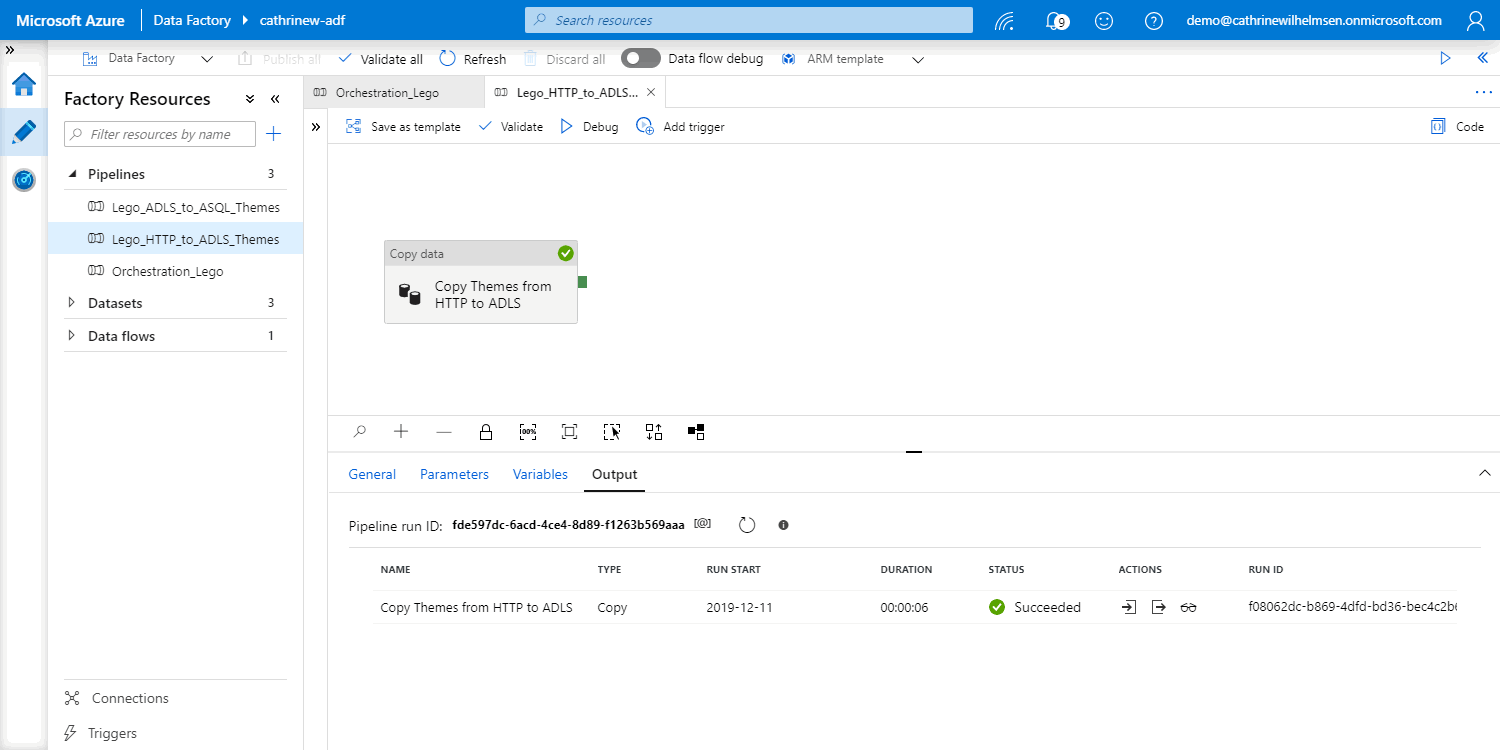

How do I debug nested pipelines?

When you debug pipelines with execute pipeline activities, you can click on output, then click on the pipeline run ID:

This opens the pipeline and shows you that specific pipeline run:

Summary

In this post, we looked at what happens when you debug a pipeline, how to see the debugging output, and how to set breakpoints. Once your debug runs are successful, you can go ahead and schedule your pipelines to run automatically. In the next post, we will look at triggers!

About the Author

Cathrine Wilhelmsen is a Microsoft Data Platform MVP, international speaker, author, blogger, organizer, and chronic volunteer. She loves data and coding, as well as teaching and sharing knowledge - oh, and sci-fi, gaming, coffee and chocolate 🤓
