Introduction to Azure Data Factory

Hi! I’m Cathrine 👋🏻 I really like Azure Data Factory. It’s one of my favorite topics, and I can talk about it for hours. But talking about it can only help so many people - the ones who happen to attend an event where I’m presenting a session. So I’ve decided to try something new… I’m going to write an introduction to Azure Data Factory! And not just one blog post. A whole bunch of them.

I’m going to take all the things I like to talk about and turn them into bite-sized blog posts that you can read through at your own pace and reference later. I’ve named this series Beginner’s Guide to Azure Data Factory. You may not be new to ETL, data integration, Azure, or SQL, but we’re going to start completely from scratch when it comes to Azure Data Factory.

Does that sound good? Are you in? Cool. Let’s go!

(Oh, one last thing before we get started… I’ll be writing these posts as if we were talking to each other. That means I often casually explain things, I’ll probably ramble, I’ll definitely use a lot of emojis, and I may throw in a silly joke here and there. If you prefer short, technical explanations, hop on over to the official documentation! It’s good. Read it anyway.)

(Oh! And one last last thing. In September 2020, an official Azure Data Factory Learning Path was released on Microsoft Learn, yaaay! I’m 100% sure this will be more up to date than my series, because, you know, it’s their job and I’m trying to keep all these posts updated in my free time. So! Make sure you go through that as well!)

Ok, now let’s go! 🤓

Introduction to Azure Data Factory

What is at the core of every Business Intelligence, Data Science, and Machine Learning project?

Data.

You need data to understand what has happened in the past, to predict what may happen in the future, to discover patterns and anomalies, and to gain the insight necessary for making faster and better decisions.

But before you can do any of those things, you need to collect, store, transform, integrate, and prepare your data.

That can be a pretty big and time-consuming task, right? I don’t know about you, but I imagine that someone, at some point, got a brilliant idea. Probably something along the lines of “hey, this is taking a lot of time… wouldn’t it be cool if we could do all of those things automatically, like, in a data factory or something?”

What is Azure Data Factory?

Before we look at what Azure Data Factory is today, let’s take a quick look at its history.

Azure Data Factory v1

Azure Data Factory went into public preview on October 28th, 2014, and became generally available on August 6th, 2015. Back then, it was a fairly limited tool for processing time-sliced data. It did that part really well, but it couldn’t even begin to compete with the mature and feature-rich SQL Server Integration Services (SSIS). In the early days of Azure Data Factory, you developed solutions in Visual Studio, and even though Microsoft made improvements to the diagram view, there was a lot of JSON editing involved. It was a very different world just a few years ago.

But then! Something happened at Microsoft Ignite 2017.

Azure Data Factory v2

Azure Data Factory v2 went into public preview on September 25th, 2017. It was branded as v2 because it had so many new features and capabilities that it was almost a new product. You could now lift and shift your existing SQL Server Integration Services (SSIS) solutions to Azure. But more importantly, you could now do amazing things like looping and branching and even run pipelines on a wall-clock schedule in addition to regular intervals. WHOA! (Yeah, we can giggle about this now, but back then it was a huge announcement 😅 I even got to interview Mike Flasko and Sanjay Krishnamurthi about these new updates. Good times!) Azure Data Factory v2 became even better when the new visual tools were enabled in public preview on January 16th, 2018. DRAG AND DROP, YEAH! All these new, shiny features became generally available on June 27th, 2018.

(Note to self: I need to figure out when to celebrate Azure Data Factory’s birthday… 🤔 )

Azure Data Factory = Azure Data Factory v2

This means that today, when I talk about “Azure Data Factory”, I refer to “Azure Data Factory v2” and skip the “v2” part of the name. I mostly pretend that Azure Data Factory v1 doesn’t exist anymore 😅

So!

What is Azure Data Factory?

Azure Data Factory (ADF) is a hybrid data integration service that enables you to quickly and efficiently create automated data pipelines - without having to write any code!

That was short and to-the-point, huh? Maybe I should have skipped the history lesson. But I had too much fun digging through the archives 😂

What can you do in Azure Data Factory?

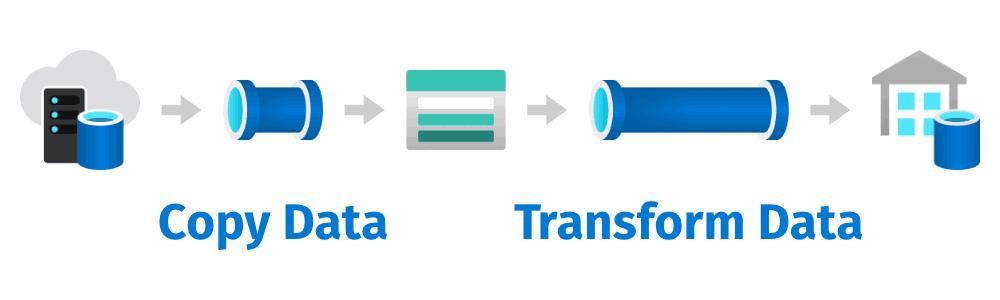

You can do many things in Azure Data Factory. I like to simplify it to two main tasks. You can copy data and you can transform data. Both of these tasks can be automated and scheduled.

Copy Data

Copying (or ingesting) data is the core task in Azure Data Factory. You can copy data to and from more than 90 Software-as-a-Service (SaaS) applications (such as Dynamics 365 and Salesforce), on-premises data stores (such as SQL Server and Oracle), and cloud data stores (such as Azure SQL Database and Amazon S3). During copying, you can even convert file formats, zip and unzip files, and map columns implicitly and explicitly - all in one task.

Yeah. It’s powerful 🤩
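
You don’t have to write any code to build this, but it can help to know that a pipeline is stored as JSON behind the scenes. Here’s a minimal sketch in Python of roughly what a pipeline with a single copy activity and explicit column mapping could look like. All the names (pipeline, activity, datasets, columns) are made up for illustration, and the source and sink types assume a CSV file being copied into Azure SQL Database. Treat it as a rough picture of the shape, not as the definition of your pipeline.

```python
import json


def build_copy_pipeline(pipeline_name, source_dataset, sink_dataset, column_mappings):
    """Build a dict shaped like a pipeline definition with one copy activity.

    All names passed in are hypothetical; the datasets they refer to
    would have to exist in your own data factory.
    """
    copy_activity = {
        "name": "CopyCustomers",  # hypothetical activity name
        "type": "Copy",
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            # Assumption: a delimited text (CSV) source and an Azure SQL sink
            "source": {"type": "DelimitedTextSource"},
            "sink": {"type": "AzureSqlSink"},
            # Explicit column mapping: source column name -> sink column name
            "translator": {
                "type": "TabularTranslator",
                "mappings": [
                    {"source": {"name": src}, "sink": {"name": dst}}
                    for src, dst in column_mappings.items()
                ],
            },
        },
    }
    return {"name": pipeline_name, "properties": {"activities": [copy_activity]}}


pipeline = build_copy_pipeline(
    pipeline_name="CopyCustomersPipeline",   # hypothetical
    source_dataset="CustomersCsv",           # hypothetical
    sink_dataset="CustomersSqlTable",        # hypothetical
    column_mappings={"Id": "CustomerId", "Name": "CustomerName"},
)

print(json.dumps(pipeline, indent=2))
```

If you leave out the translator part, you get the implicit mapping instead, where columns are matched by name.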

Transform Data

In addition to copying data, you can also transform data. Previously, the only way to do this was to use external services like Azure HDInsight or SQL Server Stored Procedures. But in 2019, Azure Data Factory completed the data integration story by adding new data transformation capabilities called Data Flows. Now you can both copy and transform data in the same user interface, making Azure Data Factory a complete ETL and data integration tool.

Awesome! 🥳

Summary

In this introduction to Azure Data Factory, we looked at what Azure Data Factory is and what its use cases are. After digging through some history to see how it has evolved and improved from v1 to v2, we looked at its two main tasks: copying and transforming data.

In the rest of the Beginner’s Guide to Azure Data Factory, we will go through how to do both of these things - and more. But before we can do that, let’s start by creating an Azure Data Factory!

About the Author

Cathrine Wilhelmsen is a Microsoft Data Platform MVP, international speaker, author, blogger, organizer, and chronic volunteer. She loves data and coding, as well as teaching and sharing knowledge - oh, and sci-fi, gaming, coffee and chocolate 🤓
